-

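In recent years, with the rapid development of mobile ad hoc networking, cooperative control, sensing and detection, and artificial intelligence technologies, UAV groups have increasingly exhibited the characteristics of distributed group intelligence, self-organization, and non-cooperative behavior. From a military perspective, the development of UAV group technology challenges traditional joint air defense systems and may even trigger disruptive changes in the air defense equipment system. As UAV group technology advances, counter-UAV-group technology has become increasingly complex [1-2]. Detecting an intruding UAV group in time makes a wide range of countermeasures available. Developing UAV group target detection and recognition technology is therefore a prerequisite for, and the key to, battlefield situational awareness in counter-UAV operations.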

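Infrared detection technology continues to mature. Compared with ordinary visible-light systems, infrared systems adapt well to the environment, and the advantage is most pronounced in severe weather such as cloud and fog. Because infrared imaging relies mainly on the differences in temperature and emitted power between target and background, infrared detection outperforms ordinary visible-light detection in target detection, recognition, and characteristic sensing.

UAV targets fly low and slowly, and general-purpose one-stage and two-stage detection algorithms perform poorly on them, so many researchers have improved the basic detection networks. Wang Jiannan et al. [3] introduced multiple dilated convolutions with different sampling rates to strengthen the extraction of multi-scale detail features of UAVs. Liu Shanliang et al. [4] proposed a double-frustum feature fusion structure to improve UAV detection accuracy. Zhang Lingling et al. [5] fused high-level semantic information with shallow detail information and introduced a channel attention mechanism, improving feature extraction for small UAV targets. Building on the fusion of deep and shallow features, Ma Qi et al. [6] used a residual network and multi-scale feature fusion to improve UAV detection accuracy.

The methods above detect single UAVs. UAV groups, however, are densely distributed, and group members are easily occluded in the image, so detecting UAV group targets requires attention to how well the network extracts UAV features. Zhou Qiang et al. [7] adopted the lightweight network MobileNetV2 and adjusted the number of network channels, improving detection accuracy for multiple UAV targets. Zhang Ruixin et al. [8] introduced a decoupled non-local operator into the CenterNet detection network to improve UAV group detection accuracy and localization quality. Qi Jiangxin et al. [9] used the lightweight network MobileNetV3 to reduce the parameter count and computation of the original network in order to improve UAV cluster detection accuracy. Wang et al. [10] proposed a lightweight UAV group detection method based on YOLOX to address the low accuracy and high computational cost of existing UAV detection algorithms.

These methods improve the basic detection networks and obtain good detection models, but they do not consider information such as the relative positions and bounding-box sizes of UAV group members. They treat each UAV as an independent target, which easily leads to missed and false detections of group targets. The state of a group member depends not only on itself but also on its neighbors. Exploiting the relations between group members can therefore effectively reduce missed and false detections of members and enable sensing of the formation structure characteristics of the UAV group. Based on this analysis, this paper proposes a method for sensing the formation structure characteristics of UAV groups that builds on infrared detection and the YOLOv5 algorithm.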

-

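A UAV group has structural characteristics such as its formation, the relations among group members, and the relations between groups, and it can adopt different formations for different mission requirements. If the formation is known before countermeasures are taken, it can be combined with other relevant information to identify the key communication nodes of the group, so that after countermeasures the group loses its operational capability and cannot complete its mission, which improves the effectiveness and efficiency of the countermeasures. Sensing the formation of a UAV group is therefore a prerequisite for battlefield situational awareness. This paper uses an infrared detection system to detect UAV groups and collect UAV group data, and applies an improved UAV group target detection algorithm to detect the group members and identify the group, thereby sensing its formation structure.

Building on infrared detection and YOLOv5, this paper proposes a UAV group formation structure sensing algorithm called GMR-YOLOv5. The algorithm fuses the space-to-depth conversion module [11] (Space-to-Depth Non-strided Convolution, SPD-Conv) with the channel attention module [12] (Channel Attention Net, CAN) to form the Space to Depth-Channel Attention Net (SD-CAN) module. SPD-Conv converts UAV features from the spatial dimension to the channel dimension but, unlike the channel attention mechanism, does not attend to the correlation between channels. The SD-CAN module designed here both converts target features from the spatial dimension to the channel dimension and attends to the UAV features in the channels. In addition, to address the weak texture features of UAV group members in infrared images, a Group Member Relation (GMR) module is constructed. The module makes full use of structural information, such as the positions and bounding-box sizes of group members in the image, and incorporates it into the relational information between members; existing detection algorithms do not take this information into account. Finally, the two modules are integrated into the YOLOv5 base network. Validation experiments on a self-built UAV group dataset show that GMR-YOLOv5 effectively improves detection accuracy for UAV group targets, reduces missed and false detections, and achieves sensing of the formation structure characteristics of the UAV group.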

-

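GMR-YOLOv5 introduces the SD-CAN module and the GMR module into the original YOLOv5 network. The resulting network structure is shown in Fig. 1, where the red boxes mark the SD-CAN module and the green boxes mark the GMR module. First, in the Neck, the Concat layer is kept between the SPD layer and the CAN layer so that the feature maps extracted by the convolutional layers are better used. Introducing the SD-CAN module into YOLOv5 increases the network's attention to targets and improves its ability to extract UAV features. Second, because UAV group members are similar in appearance and size, structural information such as the positions and sizes of members in the image is used to build the GMR module, which relates the group members to one another rather than detecting them as isolated targets. The GMR module is attached to the output of the YOLOv5 network that already includes the SD-CAN module; this output provides both the positional (geometric) information and the size features of the UAV targets.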

-

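Against a complex low-altitude background, UAV group members appear at small scales. The convolutional downsampling rate in YOLOv5 is large, which easily causes feature information of UAV targets to be lost. The space-to-depth conversion module converts UAV features from the spatial dimension to the channel dimension, but it only concatenates the features along the channel dimension and passes them to a convolution layer with stride 1, without further considering the UAV features within the channels. This paper therefore introduces an attention mechanism into the space-to-depth conversion module and builds the SD-CAN module, so that the detection network pays more attention to the UAV features in the channels. Introducing the SD-CAN module into YOLOv5 improves the network's feature extraction for UAV targets.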

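The SD-CAN module consists of a space-to-depth (SPD) layer, a channel attention layer, and a non-strided convolution layer; its structure is shown in Fig. 2. The SPD layer converts the spatial dimension of the feature map into the channel dimension, and the non-strided convolution layer changes the channel dimension of the UAV feature map (where $ {C_3} < 4{C_1} = {C_2} $), as shown in Fig. 2(f) and (g). To retain as much of the discriminative feature information of the UAV feature map as possible, a convolution layer with $ stride = 1 $ is chosen; with $ stride > 1 $, feature information is lost non-discriminatively, which degrades detection. To make further use of the UAV features in the channels, a channel attention mechanism is inserted between the SPD layer and the non-strided convolution layer. The conversion from the spatial dimension to the channel dimension is given by Eq. (1) and illustrated in Fig. 2(b)-(d). The SPD layer slices the feature map into a sequence of sub-feature maps according to the scale factor and then concatenates them along the channel dimension, which gives the layer its space-to-depth character. For an intermediate feature map $ X $ of arbitrary size $ S \times S \times {C_1} $, the sequence of sub-feature maps is sliced out as:

$$ \left\{ \begin{array}{l} f_{0,0} = X[0:S:scale,\,0:S:scale],\; f_{1,0} = X[1:S:scale,\,0:S:scale],\; \cdots,\; f_{scale-1,0} = X[scale-1:S:scale,\,0:S:scale] \\ f_{0,1} = X[0:S:scale,\,1:S:scale],\; f_{1,1} = X[1:S:scale,\,1:S:scale],\; \cdots,\; f_{scale-1,1} = X[scale-1:S:scale,\,1:S:scale] \\ \vdots \\ f_{0,scale-1} = X[0:S:scale,\,scale-1:S:scale],\; f_{1,scale-1} = X[1:S:scale,\,scale-1:S:scale],\; \cdots,\; f_{scale-1,scale-1} = X[scale-1:S:scale,\,scale-1:S:scale] \end{array} \right. $$ (1)

where $ X $ is any given feature map; the sub-map ${f_{x,y}}$ consists of all elements $X(i,j)$ for which $i - x$ and $j - y$ are divisible by the scale factor; and $ scale $ is the slicing scale factor.

Figure 2. Structure of the space to depth-channel attention net at $ scale = 2 $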
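The slicing in Eq. (1) can be illustrated with a minimal pure-Python sketch. It assumes a single-channel $S \times S$ map stored as nested lists ($C_1 = 1$); the function name `space_to_depth` and the dictionary layout are illustrative choices, not the authors' implementation.

```python
def space_to_depth(x, scale=2):
    """Slice an S x S map into scale*scale sub-maps, following Eq. (1).

    sub_maps[(a, b)] corresponds to f_{a,b} = X[a:S:scale, b:S:scale].
    Assumption: x is a square, single-channel map given as nested lists.
    """
    size = len(x)
    if size % scale != 0 or any(len(row) != size for row in x):
        raise ValueError("x must be square with a side divisible by scale")
    sub_maps = {}
    for b in range(scale):        # offset along the second axis
        for a in range(scale):    # offset along the first axis
            sub_maps[(a, b)] = [row[b::scale] for row in x[a::scale]]
    return sub_maps


# A 4 x 4 toy map whose entries are 0..15 in row-major order.
feature_map = [[r * 4 + c for c in range(4)] for r in range(4)]
sub_maps = space_to_depth(feature_map, scale=2)

# Stacking the scale*scale sub-maps along a new axis is the "depth" dimension:
# a 4 x 4 x 1 input becomes 2 x 2 x 4.
stacked = [sub_maps[key] for key in sorted(sub_maps)]
print(len(stacked), len(stacked[0]), len(stacked[0][0]))
```

Every input element appears in exactly one sub-map, so the spatial resolution is reduced without discarding information, which is the property the stride-1 convolution that follows relies on.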

-

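Against complex moving backgrounds, UAV group members have weak texture features, and their appearance and structure are easily confused with noise, whereas the spatial positions of a member and its neighbors in the image are relatively fixed. The state of a group member depends not only on itself but also on its neighbors. The GMR module exploits this relation between members to reduce missed and false detections of UAVs.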

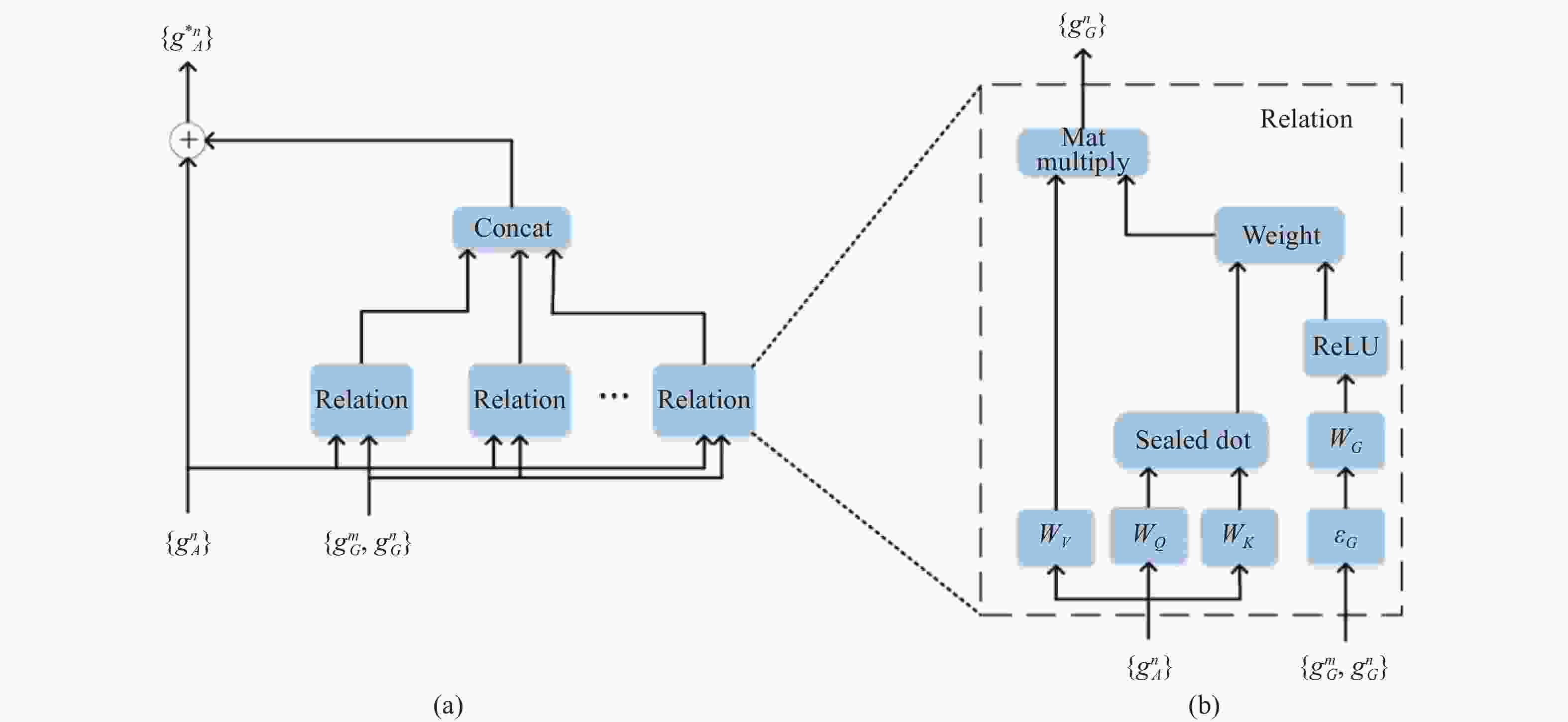

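Existing detection methods ignore the intrinsic relations among UAV group members and are therefore prone to missed and false detections of members. Making full use of the relational information between members is the key to reducing these errors. Hu et al. [13] proposed a structure for modeling relations between objects. Building on it, this paper uses structural information such as the positions and sizes of group members in the image to design the GMR module, whose structure is shown in Fig. 3(a). The module takes the appearance features and geometric features of the group members as input and outputs relation features. Rather than detecting each member as an independent target, the GMR module links the members together. When a UAV in the image is not detected but its neighbors are, the GMR module uses structural information from the surrounding members, such as their positions and sizes, to determine that the target is a group member, which reduces missed and false detections of UAV group targets.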

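In Fig. 3(a), $ g_A^n $ denotes the appearance feature of the $n$-th UAV target, including the shape and size of the UAV; $ g_G^n $ denotes the geometric feature of the $n$-th UAV target, including the position of the UAV and the size of its bounding box; and $ g_A^{*n} $ denotes the output of the relation module for the $n$-th UAV target. Relation denotes a relation feature: the two features of all UAVs ($ g_A^n $ and $ g_G^n $) are used to compute several relation features, which are concatenated and then fused with the original UAV feature. The structure of the relation feature is shown in Fig. 3(b), where $ {W_V} $, $ {W_Q} $, $ {W_K} $, and $ {W_G} $ are linear transformations that change the dimensionality of the UAV features, Weight denotes the weight $ {\omega ^{mn}} $, dot denotes the dot product, and $g_R^n$ denotes the output of the relation feature. The group member relation module and the relation feature are given by Eqs. (2) and (3), respectively:

$$ g_A^{*n} = g_A^n + \mathrm{Concat}\left[ g_R^1(n), \cdots, g_R^{N_r}(n) \right], \text{ for all } n $$ (2)

$$ g_R(n) = \sum_m \omega^{mn} \cdot \left( W_V \cdot g_A^m \right) $$ (3)

where $ g_A^{*n} $ is the output of the relation module for the $n$-th UAV target; $ {N_r} $, the number of relation features concatenated in Eq. (2), is set to 20 according to the number of UAVs in the group; and ${g_R}(n)$ is the output of the relation feature.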
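Eqs. (2) and (3) can be traced on a toy example. The sketch below assumes the weights $\omega^{mn}$ are already available (the text does not give their formula, so they are passed in as plain numbers) and uses hypothetical helper names; it is an illustration of the two equations, not the authors' GMR implementation.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]


def relation_feature(appearance, weights, w_v, n):
    """Eq. (3): g_R(n) = sum_m weights[m][n] * (W_V . g_A^m)."""
    out = [0.0] * len(w_v)
    for m, g_a in enumerate(appearance):
        projected = matvec(w_v, g_a)
        out = [o + weights[m][n] * p for o, p in zip(out, projected)]
    return out


def relation_module(appearance, weights_per_relation, w_v_per_relation, n):
    """Eq. (2): g*_A^n = g_A^n + Concat[g_R^1(n), ..., g_R^{Nr}(n)]."""
    concat = []
    for weights, w_v in zip(weights_per_relation, w_v_per_relation):
        concat.extend(relation_feature(appearance, weights, w_v, n))
    if len(concat) != len(appearance[n]):
        raise ValueError("concatenated relation features must match the feature size")
    return [a + c for a, c in zip(appearance[n], concat)]


# Toy setup (all values hypothetical): 2 members, 2-D appearance features,
# N_r = 2 relation features that each project to 1 dimension.
appearance = [[1.0, 2.0], [3.0, 4.0]]
weights = [[0.25, 0.5], [0.75, 0.5]]      # weights[m][n], assumed given
w_v_per_relation = [[[1.0, 0.0]], [[0.0, 1.0]]]
updated = relation_module(appearance, [weights, weights], w_v_per_relation, n=0)
print(updated)
```

Because the updated feature of member 0 mixes in the projected features of member 1, a weakly detected member can borrow evidence from its neighbors, which is the intuition behind the GMR module.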

-

To speed up convergence of the loss, this paper adopts the SIoU (SCYLLA-IoU) loss [14]. The SIoU loss comprises an angle cost, a distance cost, a shape cost, and an IoU cost. The angle cost makes the network converge faster and improves target localization. The loss is defined as:

$$ {L_{{\rm{SIoU}}}} = {W_{box}}{L_{box}} + {W_{cls}}{L_{cls}} $$ (4)

where ${W_{box}}$ and ${W_{cls}}$ are the weights of the box loss and the classification loss, respectively; ${L_{cls}}$ is the Focal Loss [15]; and ${L_{box}}$ is the regression loss.

The Focal Loss is defined as:

$$ {L_{{\rm{Focal\ Loss}}}} = \left\{ \begin{array}{ll} - {(1 - \hat p)^\gamma }\log (\hat p), & \text{if } y = 1 \\ - {\hat p^\gamma }\log (1 - \hat p), & \text{if } y = 0 \end{array} \right. $$ (5)

where $ \hat p $ is the predicted probability of the target; $ \gamma $ is an adjustable focusing factor; and $ y $ is the label.
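Eq. (5) can be written directly as a short function. This is a minimal sketch for a single prediction; the default `gamma=2.0` is an assumption made for illustration, since the text does not state the value used in the experiments.

```python
import math


def focal_loss(p_hat, y, gamma=2.0):
    """Eq. (5) for one prediction.

    p_hat: predicted probability of the target, strictly between 0 and 1.
    y:     label, 1 for target and 0 for background.
    gamma: adjustable focusing factor (default value is an assumption).
    """
    if not 0.0 < p_hat < 1.0:
        raise ValueError("p_hat must lie strictly between 0 and 1")
    if y == 1:
        return -((1.0 - p_hat) ** gamma) * math.log(p_hat)
    if y == 0:
        return -(p_hat ** gamma) * math.log(1.0 - p_hat)
    raise ValueError("y must be 0 or 1")


# A confident correct prediction contributes far less than an uncertain one.
easy = focal_loss(0.9, 1)
hard = focal_loss(0.6, 1)
print(easy < hard)
```

With `gamma=0` the expression reduces to the ordinary cross-entropy, so the factor only changes how strongly easy examples are down-weighted.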

The regression loss ${L_{box}}$ is defined as:

$$ {L_{box}} = 1 - {\rm{IoU}} + \frac{{\Delta + \varOmega }}{2} $$ (6)

where $\Delta $ is the distance cost and $\varOmega $ is the shape cost. ${\rm{IoU}}$ is given by Eq. (7):

$$ {\rm{IoU}} = \frac{{\left| {B \cap {B^{GT}}} \right|}}{{\left| {B \cup {B^{GT}}} \right|}} $$ (7)

where $B$ is the predicted (regressed) box and ${B^{GT}}$ is the ground-truth target box.
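Eqs. (6) and (7) can be sketched for axis-aligned boxes. The corner format `(x1, y1, x2, y2)` is an assumption, and the distance and shape costs are passed in as numbers because their formulas are not given in the text (they are defined in [14]).

```python
def iou(box, box_gt):
    """Eq. (7): intersection over union of two boxes given as (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(box[2], box_gt[2]) - max(box[0], box_gt[0]))
    inter_h = max(0.0, min(box[3], box_gt[3]) - max(box[1], box_gt[1]))
    inter = inter_w * inter_h
    area = (box[2] - box[0]) * (box[3] - box[1])
    area_gt = (box_gt[2] - box_gt[0]) * (box_gt[3] - box_gt[1])
    union = area + area_gt - inter
    return inter / union if union > 0 else 0.0


def box_loss(box, box_gt, delta, omega):
    """Eq. (6): L_box = 1 - IoU + (delta + omega) / 2.

    delta and omega are the distance and shape costs, supplied by the caller.
    """
    return 1.0 - iou(box, box_gt) + (delta + omega) / 2.0


predicted = (0.0, 0.0, 10.0, 10.0)
ground_truth = (5.0, 0.0, 15.0, 10.0)
print(iou(predicted, ground_truth))
```

For targets of roughly 9×8 to 13×11 pixels, a shift of a few pixels changes the IoU substantially, which is one reason the stricter mAP@0.50:0.95 figures are much lower than mAP@0.50.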

-

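This section describes how the dataset was built and presents the validation, comparison, and ablation experiments for the UAV group target detection algorithm.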

-

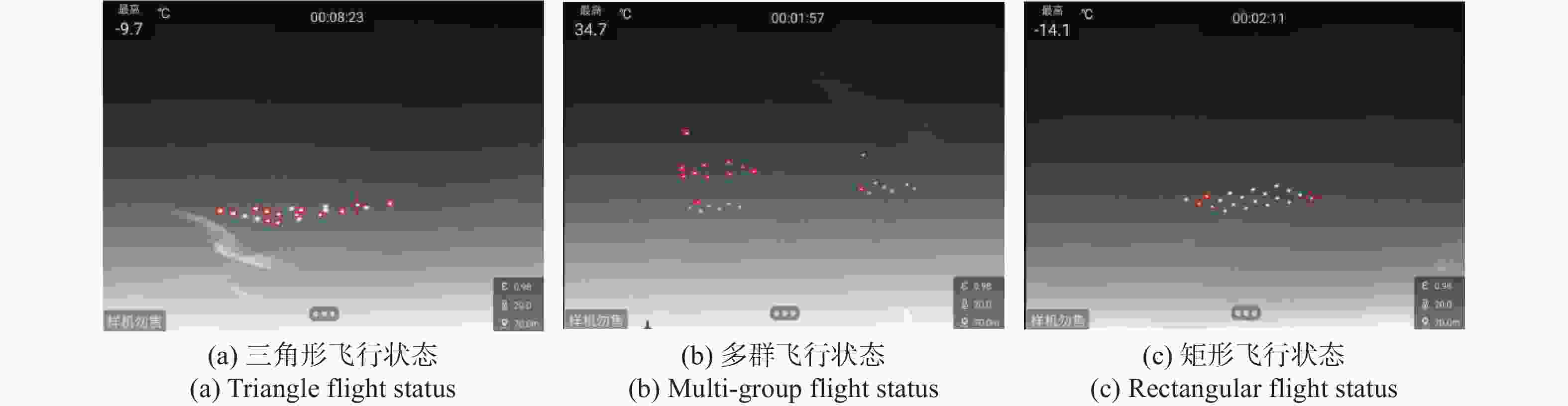

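To validate the UAV group target detection algorithm, this paper constructs a UAV group dataset, the Drone-swarms Dataset (DSD). No open-source UAV group dataset is currently available, and because a group contains many UAVs, a high-quality UAV group dataset is hard to obtain. An infrared camera was used to record video sequences of UAV groups in a variety of flight states; frames were extracted from the videos and screened, yielding a dataset of 6900 infrared images. The Drone-swarms Dataset is annotated in PASCAL VOC format, with 70% of the images used for training and 30% for testing. Table 1 lists the number of images, the image resolution, the number of UAV targets per image, and the target size. The groups in the dataset fly in triangular, rectangular, circular, and multi-group configurations, as shown in Fig. 4.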

Table 1. Dataset information

| Item | Value |
| --- | --- |
| Dataset name | Drone-swarms Dataset |
| Number of images | 6900 |
| Image resolution/pixel | 960×540 |
| Number of UAVs per image | 20-25 |
| UAV target size/pixel | 9×8-13×11 |

-

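The configuration of the experimental platform is listed in Table 2. The experiments were run on the Drone-swarms Dataset. The configuration file was modified according to the YOLOv5 training requirements, with the parameter values in Table 3, including the number of epochs, the attenuation (decay) factor, the batch size, and the learning rate. Mean average precision (mAP) and detection speed in frames per second (FPS) were chosen as the metrics for evaluating the detection algorithms.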

Table 2. Configuration of the experimental platform

| Item | Specification |
| --- | --- |
| Operating system | Ubuntu 18.04 |
| CPU | Intel Xeon Gold 6230 ×2 |
| GPU | NVIDIA RTX 8000 ×2 |
| RAM | 192 GB (32 GB×6) DDR4, 2933 MT/s |
| Development environment | Python 3.6, PyTorch 1.10.0, CUDA 11.4 |

Table 3. Parameter settings

| Parameter | Value |
| --- | --- |
| Epochs | 300 |
| Attenuation (decay) factor | 0.0005 |
| Learning rate | 0.001 |
| Optimizer | Adam |
| Batch size | 8 |

-

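To verify the effectiveness of the proposed algorithm, it was compared with YOLOv5 on the Drone-swarms Dataset under the same experimental environment. The mAP of the proposed algorithm and of YOLOv5 is 95.9% and 89.6%, respectively, and the detection speeds are 59 FPS and 67 FPS. The proposed algorithm is therefore more accurate at a modest cost in speed. The detection results of the two algorithms are shown in Fig. 5 and Fig. 6.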

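The results and Figs. 5 and 6 show that the proposed algorithm outperforms the original YOLOv5. The original YOLOv5 network, which lacks the SD-CAN and GMR modules, produces missed and false detections of group members across the various flight states: Fig. 6(a) and (b) show missed detections, and Fig. 6(c) shows a false detection. Although GMR-YOLOv5 is slightly slower than the original YOLOv5, it improves detection accuracy and reduces missed and false detections.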

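To further verify the advantages of the proposed algorithm, it was compared with several classical detection algorithms: YOLOv7, YOLOX, SSD, and Faster R-CNN. The comparison results are given in Table 4, and the detection results are shown in Figs. 7-9. In Table 4, mAP@0.50 is the mAP computed at IoU = 0.50, and mAP@0.50:0.95 is the mAP averaged over IoU thresholds from 0.50 to 0.95 in steps of 0.05.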
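The mAP@0.50:0.95 definition can be made concrete with a small sketch: it lists the ten IoU thresholds and averages a per-threshold mAP. The per-threshold values below are hypothetical placeholders, not results from the paper, and the function names are illustrative.

```python
def iou_thresholds(start=0.50, stop=0.95, step=0.05):
    """IoU thresholds from start to stop inclusive, rounded to two decimals."""
    count = int(round((stop - start) / step)) + 1
    return [round(start + i * step, 2) for i in range(count)]


def mean_over_thresholds(map_by_threshold):
    """Average the mAP values measured at each IoU threshold."""
    return sum(map_by_threshold.values()) / len(map_by_threshold)


thresholds = iou_thresholds()
# Hypothetical per-threshold mAP values, decreasing as the threshold tightens.
hypothetical_map = {t: 0.95 - 0.08 * i for i, t in enumerate(thresholds)}
print(thresholds)
print(round(mean_over_thresholds(hypothetical_map), 3))
```

Because the stricter thresholds pull the average down, mAP@0.50:0.95 rewards tight localization, whereas mAP@0.50 mostly reflects whether a target was found at all.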

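Table 4. Comparative experimental results

| Algorithm | mAP@0.50 | mAP@0.50:0.95 | FPS |
| --- | --- | --- | --- |
| GMR-YOLOv5 | 95.9% | 70.1% | 59 |
| YOLOv5 | 89.6% | 60.5% | 67 |
| YOLOv7 | 86.3% | 28.9% | 40 |
| YOLOX | 85.9% | 28.8% | 33 |
| SSD | 56.7% | 23.2% | 87 |
| Faster R-CNN | 58.3% | 30.1% | 16 |

Table 4 shows that the proposed algorithm achieves the highest mAP@0.50 and mAP@0.50:0.95 in the comparison, while its detection speed is lower than that of the original YOLOv5. SSD is the fastest of the algorithms but has the lowest mAP@0.50 and mAP@0.50:0.95. Figs. 5-9 show that YOLOv7, YOLOX, and SSD tend to miss group members, as in Fig. 7, Fig. 8(a)-(c), and Fig. 9(b) and (c). Overall, the proposed algorithm outperforms the other classical detection algorithms.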

-

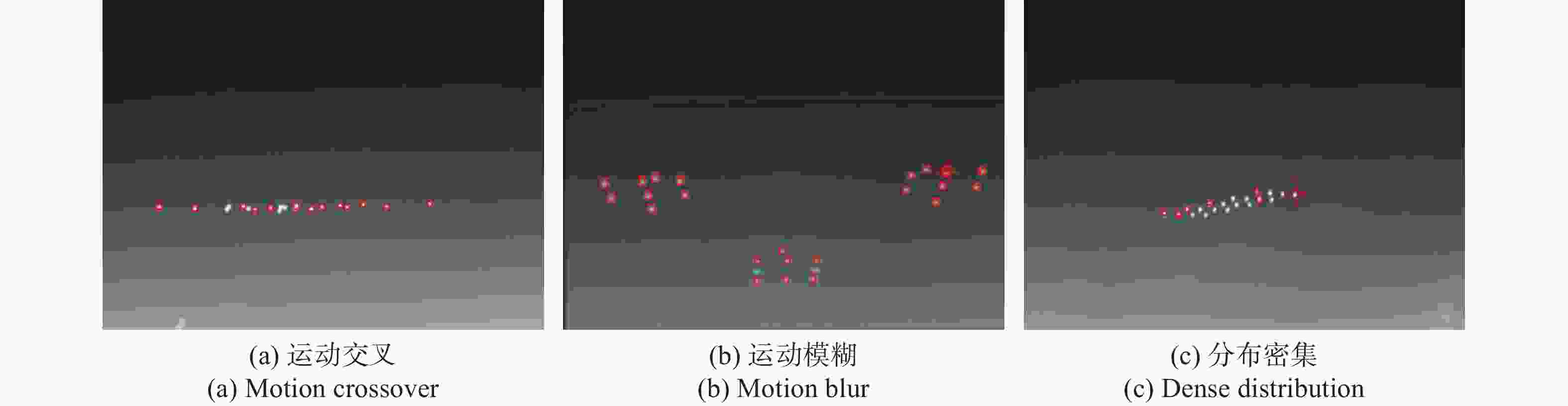

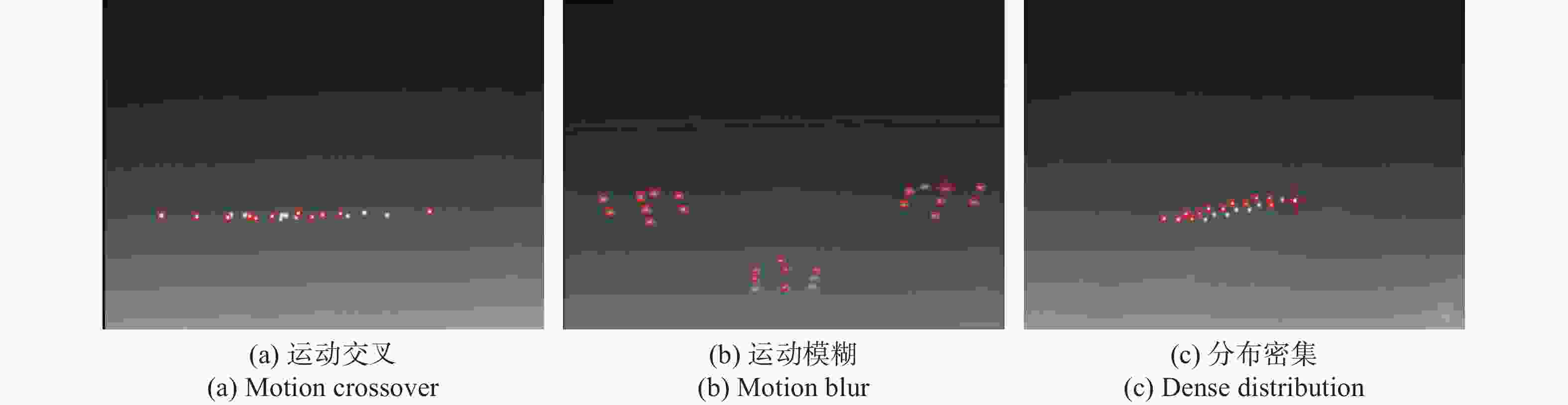

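To verify the robustness of GMR-YOLOv5 to interference, this section compares GMR-YOLOv5, YOLOv5, YOLOv7, and YOLOX on three subsets of the Drone-swarms Dataset that represent three interference conditions: crossing motion, motion blur, and dense distribution. The three subsets contain 606, 564, and 523 images, respectively; their details are listed in Table 5. The results are given in Tables 6-8, and the detection results are shown in Figs. 10-13.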

Table 5. Information about the dataset for the three interference scenarios

| Item | Value |
| --- | --- |
| Motion crossover | 606 images |
| Motion blur | 564 images |
| Dense distribution | 523 images |
| UAV target size/pixel | 9×8-13×11 |

Table 6. Comparison of experimental results in the case of motion crossover

| Algorithm | mAP@0.50 | mAP@0.50:0.95 | FPS |
| --- | --- | --- | --- |
| GMR-YOLOv5 | 82.2% | 43.4% | 60 |
| YOLOv5 | 58.8% | 15.1% | 69 |
| YOLOv7 | 69.1% | 16.2% | 21 |
| YOLOX | 75.9% | 17.2% | 30 |

Table 7. Comparison of experimental results in the case of motion blur

| Algorithm | mAP@0.50 | mAP@0.50:0.95 | FPS |
| --- | --- | --- | --- |
| GMR-YOLOv5 | 91.3% | 56.2% | 60 |
| YOLOv5 | 87.3% | 26.9% | 69 |
| YOLOv7 | 86.1% | 26% | 23 |
| YOLOX | 88% | 24.8% | 30 |

Table 8. Comparison of experimental results in the case of dense distribution

| Algorithm | mAP@0.50 | mAP@0.50:0.95 | FPS |
| --- | --- | --- | --- |
| GMR-YOLOv5 | 90.9% | 55.5% | 60 |
| YOLOv5 | 75.5% | 20.8% | 69 |
| YOLOv7 | 83.7% | 18.5% | 28 |
| YOLOX | 80% | 16.3% | 30 |

Figure 10. Detection results of the GMR-YOLOv5 algorithm in the three interference cases

Figure 11. Detection results of the YOLOv5 algorithm in the three interference cases

Figure 12. Detection results of the YOLOv7 algorithm in the three interference cases

Figure 13. Detection results of the YOLOX algorithm in the three interference cases

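Tables 6-8 and Figs. 10-13 show that GMR-YOLOv5 achieves the highest mAP@0.50 and mAP@0.50:0.95 under all three interference conditions. The original YOLOv5 is the fastest algorithm in this comparison (69 FPS) but performs relatively poorly on mAP@0.50. The original YOLOv5, YOLOv7, and YOLOX are prone to missed and false detections of group members under the three conditions, as shown in Figs. 11-13. Overall, the proposed GMR-YOLOv5 algorithm shows good robustness to interference.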

-

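To show the improvement of the proposed UAV group target detection algorithm over the original YOLOv5, an ablation study was carried out. Each improved component of GMR-YOLOv5 was trained and validated on the Drone-swarms Dataset; the results are listed in Table 9.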

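Table 9. Results of ablation experiment

| Algorithm | mAP@0.50 | mAP@0.50:0.95 | FPS |
| --- | --- | --- | --- |
| YOLOv5 | 89.6% | 60.5% | 67 |
| YOLOv5+SD-CAN | 93.2% | 58.1% | 60 |
| YOLOv5+GMR | 91.3% | 60.4% | 70 |
| YOLOv5+SD-CAN+SIoU | 93% | 58.3% | 60 |
| YOLOv5+GMR+SIoU | 92.3% | 61.9% | 70 |
| YOLOv5+SD-CAN+GMR | 92.1% | 57.9% | 60 |
| YOLOv5+SD-CAN+GMR+SIoU | 95.9% | 70.1% | 59 |

Table 9 shows that introducing the SD-CAN module into YOLOv5 raises mAP@0.50 by about 4% (from 89.6% to 93.2%), but lowers mAP@0.50:0.95 and the detection speed. Introducing the GMR module raises mAP@0.50 by about 1.9% (to 91.3%) and gives the fastest detection speed in the ablation (70 FPS). With both the SD-CAN and GMR modules introduced, mAP@0.50:0.95 falls by about 4.3% (from 60.5% to 57.9%), while mAP@0.50 rises by about 2.8% (to 92.1%). These percentages are relative changes with respect to the YOLOv5 baseline. The full algorithm (SD-CAN, GMR, and SIoU together) is slower than the baseline (59 FPS versus 67 FPS) but improves both mAP@0.50 (95.9%) and mAP@0.50:0.95 (70.1%).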
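The percentage changes quoted for the ablation match relative changes against the YOLOv5 baseline rather than percentage-point differences. A short check, using only the values in Table 9 (the function name and dictionary keys are illustrative):

```python
def relative_change(new, base):
    """Relative change of `new` with respect to `base`, in percent."""
    return (new - base) / base * 100.0


baseline = {"mAP50": 89.6, "mAP50_95": 60.5}
variants = {
    "YOLOv5+SD-CAN": {"mAP50": 93.2, "mAP50_95": 58.1},
    "YOLOv5+GMR": {"mAP50": 91.3, "mAP50_95": 60.4},
    "YOLOv5+SD-CAN+GMR": {"mAP50": 92.1, "mAP50_95": 57.9},
    "YOLOv5+SD-CAN+GMR+SIoU": {"mAP50": 95.9, "mAP50_95": 70.1},
}

for name, scores in variants.items():
    changes = {k: round(relative_change(v, baseline[k]), 1) for k, v in scores.items()}
    print(name, changes)
```

Read this way, the roughly 7% improvement of GMR-YOLOv5 over YOLOv5 reported in the abstract corresponds to a gain of 6.3 percentage points in mAP@0.50.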

-

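To address the tendency of existing UAV group detection algorithms to miss or falsely detect targets and their inability to sense the structural characteristics of the group, this paper proposes an infrared-detection-based algorithm for sensing UAV group structure characteristics. First, the space-to-depth conversion module is combined with the channel attention mechanism to build the SD-CAN module, which not only converts UAV features from the spatial dimension to the channel dimension but also uses channel attention to focus the network on the UAV features in the channels, improving the detection network's feature extraction for group members. Second, structural information such as the positions and bounding-box sizes of group members in the image is used to build the GMR module, which relates group members to one another and thereby improves the network's ability to detect and localize them. In addition, the SIoU loss function is adopted to accelerate network convergence. Finally, experiments on the UAV group dataset yield a network model with an mAP of 95.9% and a detection speed of 59 FPS, achieving sensing of the UAV group formation structure.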

Structure characteristics sensing method of unmanned aerial vehicle group based on infrared detection

-

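Abstract: Existing target detection algorithms do not consider the relations between UAV group members, so they are prone to missed and false detections of members and cannot sense the formation structure characteristics of the group. To address this, a method for sensing UAV group structure characteristics based on infrared detection is proposed. First, to reduce the loss of UAV appearance features in the image, a space to depth-channel attention module is designed; it combines the ability of the space-to-depth conversion module to preserve discriminative feature information with the ability of channel attention to capture correlations between channels, improving the feature extraction of the detection network. Second, to make full use of structural information such as the positions and bounding-box sizes of group members in the image, a group member relation module is proposed; it incorporates the structural information of the UAVs into the relational information between members, improving the network's ability to detect and localize them. Finally, experiments are carried out on the self-built Drone-swarms Dataset. The results show that the proposed algorithm reaches an mAP of 95.9%, about 7% higher than that of the original YOLOv5, effectively improving detection accuracy for group members. The detection speed reaches 59 frames/s, enabling real-time detection of UAV group targets and, in turn, sensing of the formation structure characteristics of the group.

Abstract: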

Objective With the rapid development of mobile ad hoc networking, cooperative control, sensing and detection, and artificial intelligence technologies, unmanned aerial vehicle (UAV) groups have gradually shown the characteristics of distributed group intelligence, self-organization, and non-cooperative behavior. Timely detection of an attacking UAV group makes a wide range of countermeasures possible. Countermeasures such as navigation deception, physical capture, and physical destruction can be taken against a small number of UAVs, but once a large number of UAVs gather to form a group, countermeasures become difficult. The development of UAV group target detection and identification technology is therefore a prerequisite for, and the key to, battlefield situational awareness in counter-UAV operations. Existing target detection algorithms do not consider the interrelationship between UAV group members, are prone to missed and false detections of group members, and fail to sense the structural characteristics of the group. To address these problems, a method for sensing the structural characteristics of UAV groups based on infrared detection is proposed.

Methods Based on infrared detection and the YOLOv5 algorithm, an algorithm for sensing the structural characteristics of UAV groups, called GMR-YOLOv5, is proposed. The algorithm fuses the Space-to-Depth Non-strided Convolution (SPD-Conv) module with the Channel Attention Net (CAN) module to form the Space to Depth-Channel Attention Net (SD-CAN) module. The SPD-Conv module converts UAV features from the spatial dimension to the channel dimension but, unlike the channel attention mechanism, does not focus on the correlation between channels. The SD-CAN module both converts target features from the spatial dimension to the channel dimension and focuses on the UAV features in the channels. Meanwhile, because the texture features of UAV group members are not obvious in infrared images, the Group Member Relation (GMR) module is constructed. This module makes full use of structural information, such as the positions and bounding-box sizes of UAV group members in the infrared image, and incorporates it into the association information between group members, which existing target detection algorithms do not consider. Finally, the two modules are integrated into the YOLOv5 base network, and validation experiments are carried out on a self-built UAV group dataset.

Results and Discussions Experimental validation was carried out on the constructed Drone-swarms Dataset (Tab.1, Fig.4). The mAP of the proposed GMR-YOLOv5 algorithm reached 95.9%, about 7% higher than that of the original YOLOv5 algorithm, effectively improving the detection accuracy of UAV group members (Tab.4). Meanwhile, the detection speed reached 59 FPS, which achieves real-time detection of UAV group targets and sensing of UAV group structure characteristics. Compared with the classical detection algorithms, the GMR-YOLOv5 algorithm reduces missed and false detections of UAV targets (Fig.5-Fig.9). Ablation experiments were also conducted to demonstrate the effectiveness of each improved module. The results show that, although its detection speed is reduced, the proposed algorithm improves both mAP@0.50 and mAP@0.50:0.95 (Tab.9).

Conclusions An algorithm for sensing the structural characteristics of UAV groups based on infrared detection is proposed. Firstly, the SPD-Conv module and the CAN module are combined to build the SD-CAN module, which not only converts UAV features from the spatial dimension to the channel dimension, but also uses the channel attention mechanism to make the network pay more attention to the UAV features in the channels, improving the detection network's feature extraction for UAV group members. Secondly, using structural information such as the positions and bounding-box sizes of group members in the infrared image, the GMR module is proposed; it builds connections among UAV group members and thereby improves the network's ability to detect and localize them. Meanwhile, the SIoU loss function is used to accelerate the convergence of the network. Finally, experimental validation on the UAV group dataset yields a network model with an mAP of 95.9% and a detection speed of 59 FPS, achieving UAV group structure characteristic sensing.

Key words: infrared detection; UAV group; group member structure; channel attention; group member relation

-


[1] Zhao Yuemeng, Liu Huigang. Detection and tracking of low-altitude unmanned aerial vehicles based on optimized YOLOv4 algorithm [J]. Laser & Optoelectronics Progress, 2022, 59(12): 397-406. (in Chinese) doi: 10.3788/LOP202259.1215017

[2] Bao Wenqi, Xie Liqiang, Xu Caihua, et al. Real-time detection method of micro UAV based on YOLOv5 [J]. Journal of Ordnance Equipment Engineering, 2022, 43(5): 232-237. (in Chinese) doi: 10.11809/bqzbgcxb2022.05.037

[3] Wang Jiannan, Lv Shengtao, Niu Jian. Drone detection method based on improved YOLOv5 [J]. Optics & Optoelectronic Technology, 2022, 20(5): 48-56. (in Chinese) doi: 10.19519/j.cnki.1672-3392.2022.05.015

[4] Liu Shanliang, Wu Renbiao, Qu Jingyi, et al. Bi PPYOLO tiny: A lightweight airport UAV detection method [J]. Journal of Safety and Environment, 2023, 23(2): 480-488. (in Chinese) doi: 10.13637/j.issn.1009-6094.2021.1818

[5] Zhang Lingling, Wang Peng, Li Xiaoyan, et al. Low-altitude UAV detection method based on optimized SSD [J]. Computer Engineering and Applications, 2022, 58(16): 204-212. (in Chinese) doi: 10.3778/j.issn.1002-8331.2201-0067

[6] Ma Qi, Zhu Bin, Zhang Hongwei, et al. Low-altitude UAV detection and recognition method based on optimized YOLOv3 [J]. Laser & Optoelectronics Progress, 2019, 56(20): 201006. (in Chinese) doi: 10.3788/LOP56.201006

[7] Zhou Qiang, Xia Mingyun. Research on Multi-UAV detection based on improved SSD algorithm [J]. Information Technology, 2020, 44(12): 71-76. (in Chinese) doi: 10.13274/j.cnki.hdzj.2020.12.014

[8] Zhang Ruixin, Li Ning, Zhang Xiaxia, et al. Low-altitude UAV detection method based on optimized CenterNet [J]. Journal of Beijing University of Aeronautics and Astronautics, 2022, 48(11): 2335-2344. (in Chinese) doi: 10.13700/j.bh.1001-5965.2021.0108

[9] Qi Jiangxin, Wu Ling, Lu Faxing, et al. UAV cluster detection based on improved YOLOv4 algorithm [J]. Journal of Ordnance Equipment Engineering, 2022, 43(6): 210-217. (in Chinese) doi: 10.11809/bqzbgcxb2022.06.033

[10] Wang C, Meng L, Gao Q, et al. A lightweight UAV swarm detection method integrated attention mechanism [J]. Drones, 2022, 7(1): 13. doi: 10.3390/drones7010013

[11] Sunkara R, Luo T. No more strided convolutions or pooling: A new CNN building block for low-resolution images and small objects [DB/OL]. (2022-08-07) https://arxiv.org/abs/2208.03641

[12] Hu J, Shen L, Sun G. Squeeze-and-excitation networks [C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018: 7132-7141.

[13] Hu H, Gu J, Zhang Z, et al. Relation networks for object detection [C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018: 3588-3597.

[14] Gevorgyan Z. SIoU loss: More powerful learning for bounding box regression [DB/OL]. (2022-05-25) https://arxiv.org/abs/2205.12740

[15] Lin T Y, Goyal P, Girshick R, et al. Focal loss for dense object detection [C]//Proceedings of the IEEE International Conference on Computer Vision, 2017: 2980-2988.
