-

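Vision is the main channel through which humans acquire information about the objective world, and optical imaging and detection technology therefore plays an important role in many fields. Conventional optical imaging relies mostly on the information carried by light intensity and wavelength. Its drawbacks are that it is strongly affected by the propagation environment and that some physical properties of the target are hard to interpret correctly. Polarization, one of the fundamental physical attributes of a light wave, conveys physical properties of the target itself and offers a new information dimension for optical imaging [1−2]. By digitally processing the measured polarization information of the target scene, polarization imaging can reduce interference from the propagation environment, improving image quality and enhancing the perception of target characteristics [3−5]; it offers clear advantages for imaging in complex environments. Polarization imaging is therefore widely used in military camouflage detection [6], ocean observation [7], biological imaging [8−10], and industrial inspection [11].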

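Polarization imaging is, in essence, an increase in the number of dimensions in which the light field is sampled. By acquiring and fusing multi-dimensional polarization information, imaging tasks in different complex environments and application areas can be addressed. For example, when light propagates through a scattering environment, the medium deflects it from its original direction and produces scattered light, which degrades image quality. Exploiting the difference between the polarization of the target signal and that of the scattered signal makes it possible to suppress backscattered light and improve imaging quality in scattering environments [12−16]. In noisy environments, several important polarization parameters (degree of polarization, angle of polarization, and so on) are highly sensitive to noise, so their signal is easily buried in it. Polarization image denoising recovers the polarization parameters of the target from noisy images [17].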

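Building a physical model and combining it with prior knowledge is an important approach to polarization imaging in complex environments. For scattering environments such as water and haze, for instance, researchers have improved imaging performance by interpreting polarization information on the basis of studies of the imaging mechanism and of physical models. The foundational work is that of Schechner and co-workers, who proposed a physical degradation model based on orthogonal linear polarization components together with a corresponding image restoration method; the method improved optical imaging quality in underwater and hazy scattering environments [18−19]. A team at the French National Centre for Scientific Research (CNRS) used non-local joint filtering with physical constraints on the polarization information to denoise Stokes and Mueller polarization images under white Gaussian noise [20−21]. The restoration quality of methods that rely on a physical model and prior knowledge depends largely on whether the model matches the physical imaging process in the real environment. Because such models are often simplified and deviate from actual application scenarios, they struggle with extremely complex imaging environments.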

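With the continued development of computing technology, hardware has become capable of processing large-scale data, and deep learning has flourished accordingly, showing unique advantages in optical imaging [22−23]. In recent years, deep-learning-based polarization imaging has also attracted considerable attention [24−25]. Deep learning has strong feature extraction and learning capability: trained on large numbers of samples, it extracts signal features and generalizes the mapping between input signals and expected outputs [26−28], which suits the processing of multi-dimensional, mutually correlated polarization parameters particularly well. Compared with methods based on physical models and prior knowledge, deep-learning-based polarization imaging in complex environments uses its capacity to learn and fit features at different levels to bypass the obstacle of building a physical model and solving a nonlinear inverse problem, and it achieves significant gains on the core objective image-quality metrics.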

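This paper first introduces the basic theory of polarization imaging, including its principle and a high-level description of the polarization imaging problem in complex environments. It then presents the general paradigm of deep-learning-based polarization imaging and, for scattering and noise, the two most representative complex imaging environments, reviews representative work and its development. Finally, possible future directions are discussed.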

-

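Polarization imaging illuminates the target with light of a specific polarization state and measures the polarization state of the transmitted or reflected light to obtain information about the physical properties of the target [29−30]. The polarization state of light is commonly described by the Stokes vector $\boldsymbol{S}$ [31], given in Eq. (1):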

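$$ \boldsymbol{S} = \begin{bmatrix} S_0 \\ S_1 \\ S_2 \\ S_3 \end{bmatrix} = S_0 \begin{bmatrix} 1 \\ P\cos 2\alpha \cos 2\varepsilon \\ P\sin 2\alpha \cos 2\varepsilon \\ P\sin 2\varepsilon \end{bmatrix} $$ (1)

where $S_0$ is the light intensity; $S_1$ is the linear polarization along the horizontal and vertical directions; $S_2$ is the linear polarization along the ±45° directions; $S_3$ is the circular polarization; $P$ is the degree of polarization; $\alpha$ is the angle of polarization; and $\varepsilon$ is the ellipticity angle. Measuring the Stokes vector of the target therefore yields its degree and angle of polarization indirectly, as given in Eq. (2). The degree of polarization (DoP) and angle of polarization (AoP) are higher-order parameters of the polarization information of the light field and are essential for understanding its polarization properties and for analyzing the material and surface morphology of the target.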

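$$ \mathrm{DoP} = \frac{\sqrt{S_1^2 + S_2^2 + S_3^2}}{S_0}, \quad \mathrm{AoP} = \frac{1}{2}\arctan\frac{S_2}{S_1} $$ (2)

The tip of the electric field vector of polarized light traces an ellipse over time. By measuring $\boldsymbol{S}$ and solving for the angle of polarization and the ellipticity angle, the shape of this polarization ellipse can be obtained, so $\boldsymbol{S}$ is also related to the geometric description of polarized light. The Mueller matrix, a $4 \times 4$ real matrix, describes the interaction between light of different polarization states and the target or medium [32]. After the light interacts with the target, the output Stokes vector $\boldsymbol{S}_{\mathrm{out}}$ satisfies Eq. (3):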

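$$ \boldsymbol{S}_{\mathrm{out}} = \begin{bmatrix} m_{11} & m_{12} & m_{13} & m_{14} \\ m_{21} & m_{22} & m_{23} & m_{24} \\ m_{31} & m_{32} & m_{33} & m_{34} \\ m_{41} & m_{42} & m_{43} & m_{44} \end{bmatrix} \boldsymbol{S} $$ (3)

Through matrix decomposition, the Mueller matrix yields the depolarization, retardance, and diattenuation that characterize the target [33]. Figure 1 shows a typical polarization imaging system, which consists of five parts: light source, polarization state generator (PSG), target, polarization state analyzer (PSA), and imaging detector. The PSG and PSA each consist of a polarizer and a retarder (such as a quarter-wave or half-wave plate). The imaging process can be summarized as follows: light of different polarization states illuminates the target surface, and the signal reflected and modulated by the surface is analyzed by the PSA and recorded by the detector.

$\boldsymbol{S}$ is measured with a passive polarization imaging system, that is, the light source and PSG are removed and ambient light serves as illumination. To measure the linear part of $\boldsymbol{S}$, the waveplate is removed from the PSA and images are captured at several polarizer angles (at least three). For ease of computation, 0°, 45°, and 90° are usually chosen. To complete the measurement, a quarter-wave plate with its fast axis horizontal is commonly placed in front of the polarizer, and the polarizer is rotated to 45° from the horizontal, so that the circular component can be solved for.

The Mueller matrix of the target is measured with an active polarization imaging system; the classical scheme is the dual-rotating mode [34-35]. In this mode the PSG and PSA each consist of a polarizer and a waveplate; the polarizers are held fixed while the two waveplates are rotated simultaneously through different angles. A complete Mueller matrix measurement requires the detector to record at least 16 measurements under different PSG and PSA settings.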

Figure 1. General polarization imaging system (blue optical components represent polarizers; gray optical components represent waveplates)

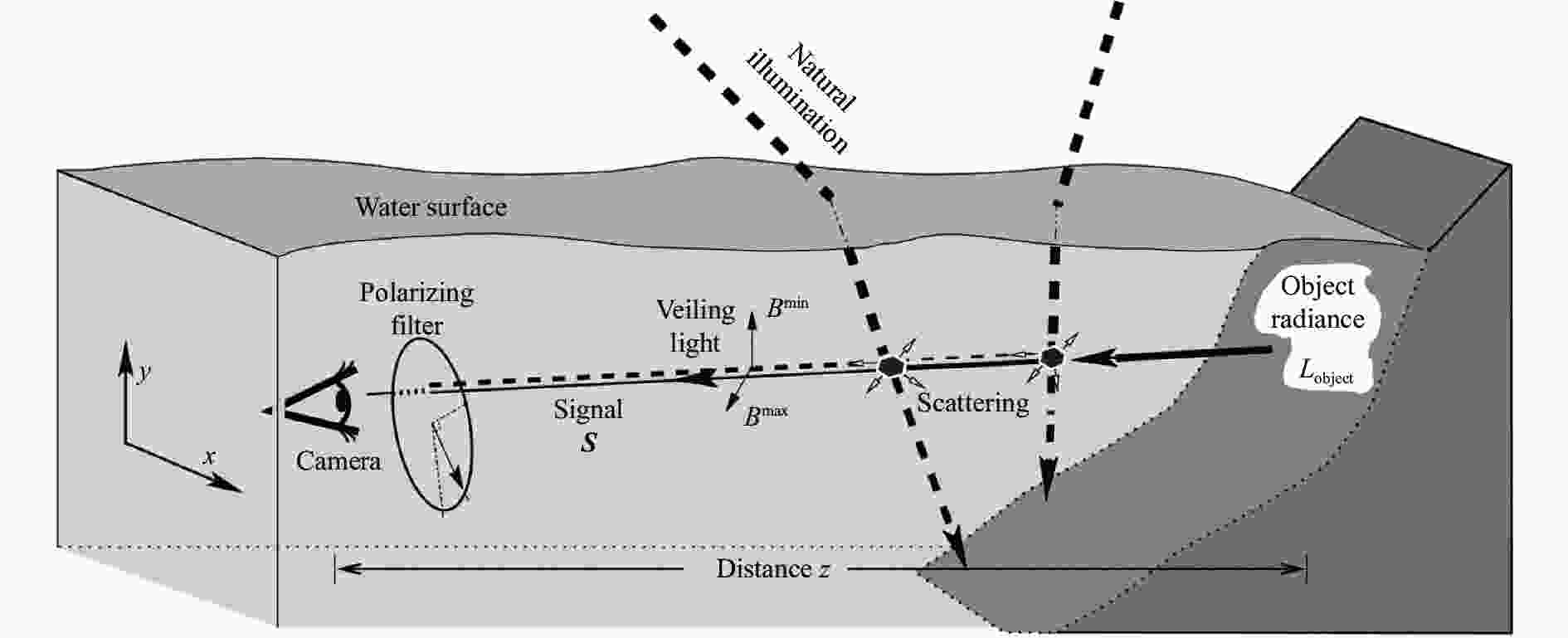
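The passive linear measurement described above can be sketched numerically. The following is a minimal illustration, assuming an ideal linear polarizer and noise-free intensities; the function names are hypothetical, and `arctan2` is used in place of the plain arctangent of Eq. (2) only to resolve the quadrant. It simulates the three intensities behind a polarizer at 0°, 45°, and 90° through Eq. (3), then recovers $S_0$, $S_1$, $S_2$, the degree of linear polarization, and the AoP.

```python
import numpy as np

def polarizer_mueller(theta_deg):
    """Mueller matrix of an ideal linear polarizer with its axis at theta_deg."""
    c = np.cos(2 * np.radians(theta_deg))
    s = np.sin(2 * np.radians(theta_deg))
    return 0.5 * np.array([
        [1, c, s, 0],
        [c, c * c, c * s, 0],
        [s, c * s, s * s, 0],
        [0, 0, 0, 0],
    ])

def measured_intensity(s_in, theta_deg):
    """Detector intensity: the S0 component of S_out = M @ S_in (Eq. 3)."""
    return (polarizer_mueller(theta_deg) @ s_in)[0]

def linear_stokes(i0, i45, i90):
    """Linear Stokes components from intensities at 0, 45 and 90 degrees."""
    s0 = i0 + i90
    s1 = i0 - i90
    s2 = 2 * i45 - s0
    return s0, s1, s2

def dolp_aop(s0, s1, s2, eps=1e-12):
    """Degree of linear polarization and angle of polarization (radians)."""
    dolp = np.sqrt(s1 ** 2 + s2 ** 2) / (s0 + eps)
    aop = 0.5 * np.arctan2(s2, s1)  # assumption: arctan2 to keep the quadrant
    return dolp, aop

# Hypothetical input state with no circular component.
s_in = np.array([1.0, 0.3, 0.4, 0.0])
i0, i45, i90 = (measured_intensity(s_in, a) for a in (0, 45, 90))
s0, s1, s2 = linear_stokes(i0, i45, i90)
dolp, aop = dolp_aop(s0, s1, s2)
```

The same functions accept whole images as NumPy arrays in `linear_stokes` and `dolp_aop`, since every operation is element-wise.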

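Real imaging environments are not ideal. Complex environments such as scattering media (haze, water, dust) and low illumination (low light, dawn and dusk) strongly affect the information acquired by a polarization imaging system. Imaging in such environments suffers from reduced contrast caused by scattered light, color distortion caused by wavelength-dependent absorption, and quality degradation caused by noise [36−39]. Figure 2 illustrates the degradation mechanism of polarization images in complex environments; the degraded image can be expressed by the functional relation in Eq. (4):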

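$$ I = F\left( G \right) $$ (4)

where $G$ is the target, $I$ is the degraded polarization parameter image recorded by the detector, and $F$ is the transfer function of polarization information in the complex environment, which depends on polarization parameters such as AoP, DoP, ellipticity angle, depolarization, and retardance. The mapping in Eq. (4) can be obtained by building a physical model together with prior knowledge, and this model describes how image quality degrades in the complex environment. Restoration is a nonlinear inverse problem; $\widehat G$ denotes the restored polarization parameter image obtained by inverting the transfer function, as given in Eq. (5):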

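$$ \widehat G = F^{-1}\left( I \right) $$ (5)

where $F^{-1}$ is the inverse of the polarization transfer function. Given the degraded image $I$ recorded by the detector, the restored polarization image is obtained by solving for the unknown parameters of the physical model and combining them with prior knowledge of the target $G$.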

-

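Unlike the approach of building a physical model with prior knowledge, deep learning takes the multi-dimensional polarization parameter images acquired by the imaging system as input and uses the nonlinear fitting capability of the network to fit the mapping expressed by Eq. (5), producing restored polarization parameter images. In essence it turns the nonlinear inverse problem of restoration into a pseudo-forward problem, avoiding the difficulty of designing algorithms for nonlinear inverse problems. This section starts from the basic deep neural network (DNN) structure, describes the general paradigm, and, in light of the characteristics of the input data, introduces convolutional neural networks (CNNs) and their advantages of weight sharing and local connectivity for image data. It closes with approaches to building polarization parameter datasets for complex environments and to designing polarization-aware losses.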

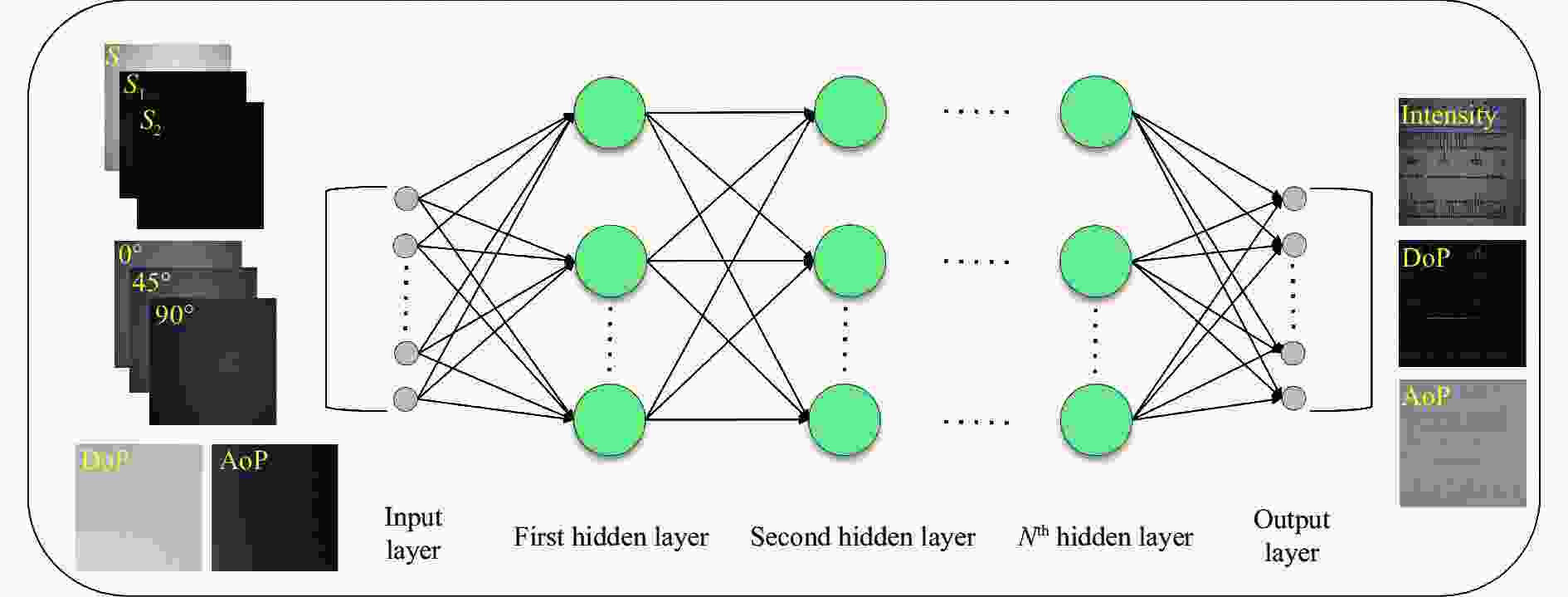

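Figure 3 shows the structure of a DNN, which consists of an input layer, hidden layers, and an output layer. The input layer takes n-dimensional features; the hidden layers contain varying numbers of neurons, and their number N is adjustable; the output layer produces m-dimensional features. The network performs the feature mapping Y→X, changing the feature dimension from n to m; in regression tasks m and n are equal. To exploit the multi-dimensional physical nature of polarization information, the input is usually a set of mutually correlated polarization parameters, which gives the network richer features and improves restoration accuracy in complex environments. These parameters include polarization images at different angles, $\boldsymbol{S}$, the Mueller matrix, and DoP and AoP images; which ones are used depends on the imaging task [40-41]. The output is usually polarization images at different angles, an intensity image, or DoP or AoP images.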

Figure 3. General workflow of deep-learning-based polarization imaging in complex environments

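A DNN can process many forms of input, but in complex-environment polarization imaging the input is image data with local similarity, so researchers usually use CNNs, which were designed for images. Local connectivity and weight sharing reflect the properties of images and also reduce the number of trainable parameters. CNNs can therefore be made deeper and structurally more complex, as in deep residual networks [42] and densely connected networks [43], which improves the extraction of features at different levels and the flow of gradient information.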
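To make the parameter saving from local connectivity and weight sharing concrete, the short sketch below compares the number of trainable parameters of one fully connected layer and one convolutional layer that map the same input to an output of the same spatial size. The image size, channel counts, and kernel size are illustrative assumptions, not values taken from any of the cited networks.

```python
def dense_params(h, w, c_in, c_out):
    """Fully connected layer: every output unit sees every input unit."""
    n_in = h * w * c_in
    n_out = h * w * c_out
    return n_in * n_out + n_out  # weights + biases

def conv_params(k, c_in, c_out):
    """Convolution: one k x k x c_in kernel per output channel, shared over all pixels."""
    return k * k * c_in * c_out + c_out  # weights + biases

# Assumed example: three 64 x 64 polarization-angle images in, 16 feature maps out.
dense = dense_params(64, 64, 3, 16)
conv = conv_params(3, 3, 16)
print(dense, conv)
```

The convolutional count does not depend on the image size at all, which is why the same kernels can be stacked into much deeper networks.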

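Because public datasets for polarization imaging in complex environments are lacking, datasets are built mainly in two ways: simulating complex environments in the laboratory and measuring in real complex environments. Physically constructing a complex environment in the laboratory and imaging it with a polarization imaging system gives low image-screening efficiency and high labeling cost [44-45]. Simulating the environment with numerical algorithms is suited to building paired datasets of a certain size quickly [46-47], but the generated data are strongly tied to the generation method and cannot capture the uncertainties of real acquisition. Real-world measurement is unsuitable for collecting paired data at scale because the environment is uncontrollable and label images are hard to obtain. Researchers therefore often test networks on a small number of real images to demonstrate that the trained network can handle real complex environments [48-49].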

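A loss function suited to multi-dimensional polarization inputs is crucial to training. Commonly used losses are pixel-level losses and perceptual losses computed on feature maps. Pixel-level losses consider only per-pixel differences between images and are usually measured with the L1 or L2 norm [50-51]. Perceptual losses measure differences between feature maps [52]; with a larger receptive field than pixel-level losses, they can suppress artifacts and false contours caused by abrupt changes in pixel values. The polarization parameters used may be images at different polarization angles, $\boldsymbol{S}$, DoP, and AoP. Constraining the loss in several polarization dimensions keeps the output as close as possible to the ground truth in each of them. During training, one or more such losses can be used depending on the imaging task. In principle, denoising requires constraints on DoP and AoP, and descattering requires constraints on the images at different polarization angles; both usually use pixel-level losses. If the restored result shows overfitting or over-smoothing, a regularization term can be added; if artifacts or false contours appear, a perceptual loss can be added. The total loss takes the form of Eq. (6):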

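$$ \mathrm{Loss} = w_1 P_1 + w_2 P_2 + \cdots + \gamma R $$ (6)

Eq. (6) shows that the loss consists of sub-losses $P_i$ and a regularization term $R$. The sub-losses differ in importance to training and are balanced by the weights $w_i$. One common strategy is magnitude matching: after one training run, the order of magnitude of each sub-loss is observed and $w_i$ is set so that all sub-losses are of the same order. Another is weighted-sum adjustment, in which the weights are adjusted automatically over the training iterations according to each sub-loss's current share of the total loss. $\gamma$ is the weight of the regularization term. The regularization term can encode physical priors that restrict the direction of gradient updates and prevent results that contradict prior knowledge of the task; it can also introduce image descriptors (such as gradient information) to prevent locally over-smoothed results that look unnatural to the eye, improving the quality of the restored image.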
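The magnitude-matching strategy and the weighted sum of Eq. (6) can be written in a few lines. This is a minimal sketch under stated assumptions: the sub-loss values are made-up numbers standing in for what might be observed after a first training pass, the function names are hypothetical, and the weights here equalize the sub-losses exactly, which is a stricter choice than merely bringing them to the same order of magnitude.

```python
import numpy as np

def magnitude_matching_weights(sub_losses, reference=None):
    """Choose w_i = reference / P_i so that every w_i * P_i equals the reference."""
    values = np.asarray(sub_losses, dtype=float)
    ref = values.max() if reference is None else reference
    return ref / np.maximum(values, 1e-12)  # guard against a zero sub-loss

def total_loss(sub_losses, weights, reg, gamma):
    """Eq. (6): weighted sum of sub-losses plus a weighted regularization term."""
    return float(np.dot(weights, sub_losses) + gamma * reg)

# Assumed observations: e.g. losses on angle images, DoP and AoP after one pass.
observed = [0.2, 0.004, 0.05]
weights = magnitude_matching_weights(observed)
loss = total_loss(observed, weights, reg=0.1, gamma=0.01)
```

In practice the weights would be computed once and then held fixed, since recomputing them at every step would make the weighted sub-losses constant.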

-

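Taking two typical complex environments, scattering and noise, as examples, this section reviews progress in deep-learning-based polarization imaging in complex environments and summarizes how the field has developed.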

-

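Figure 4 takes polarization imaging in turbid water as an example. When a target is imaged through a scattering medium, the light collected by the detector has two parts. The first is light reflected from the target, which is attenuated by absorption and scattering by particles on its way to the detector. The second is stray light scattered into the detector by the particles, called backscattered light. This stray light adds a layer of "haze" to the image and degrades its quality. In color imaging, the wavelength dependence of the absorption coefficient of the medium also causes color distortion. Polarization imaging in scattering environments thus faces the practical problem of not seeing clearly and not seeing far.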
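The two-component picture above can be illustrated with a toy forward model and its inversion, in the spirit of the classical polarization-difference approach of refs. [18−19]. This is a sketch under simplifying assumptions that are not stated in the text: the target light is taken as unpolarized, the backscatter has a known, constant degree of polarization `p_scat`, the backscatter at infinite distance `a_inf` is known, and there is no noise. All names are hypothetical.

```python
import numpy as np

def degrade(target, transmission, a_inf, p_scat):
    """Forward model: attenuated target light plus partially polarized backscatter."""
    direct = target * transmission
    backscatter = a_inf * (1.0 - transmission)
    # Images behind a polarizer at the brightest and darkest orientations.
    i_max = 0.5 * direct + 0.5 * backscatter * (1.0 + p_scat)
    i_min = 0.5 * direct + 0.5 * backscatter * (1.0 - p_scat)
    return i_max, i_min

def recover(i_max, i_min, a_inf, p_scat, t_floor=1e-3):
    """Inverse model: estimate backscatter and transmission, then undo both."""
    total = i_max + i_min
    backscatter = (i_max - i_min) / p_scat
    transmission = np.clip(1.0 - backscatter / a_inf, t_floor, 1.0)
    return (total - backscatter) / transmission

# Assumed toy scene: 2 x 2 target radiance and per-pixel transmission.
target = np.array([[0.2, 0.8], [0.5, 0.1]])
transmission = np.array([[0.9, 0.6], [0.4, 0.7]])
i_max, i_min = degrade(target, transmission, a_inf=1.0, p_scat=0.3)
restored = recover(i_max, i_min, a_inf=1.0, p_scat=0.3)
```

With exact parameters and no noise the inversion is exact; in real scenes `a_inf` and `p_scat` must be estimated, and errors in them are one reason the physical-model route degrades in complex conditions.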

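Deep-learning-based polarization imaging in scattering environments aims to interpret the polarization signals acquired through the scattering medium, using the properties of polarized light, in order to recover the polarization information of the target. The input is usually a set of multi-dimensional degraded polarization parameters. The strong nonlinear fitting and feature learning capability of deep learning is used to obtain the mapping between degraded and restored images, which is advantageous in complex scattering conditions such as non-uniform illumination and strong scattering.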

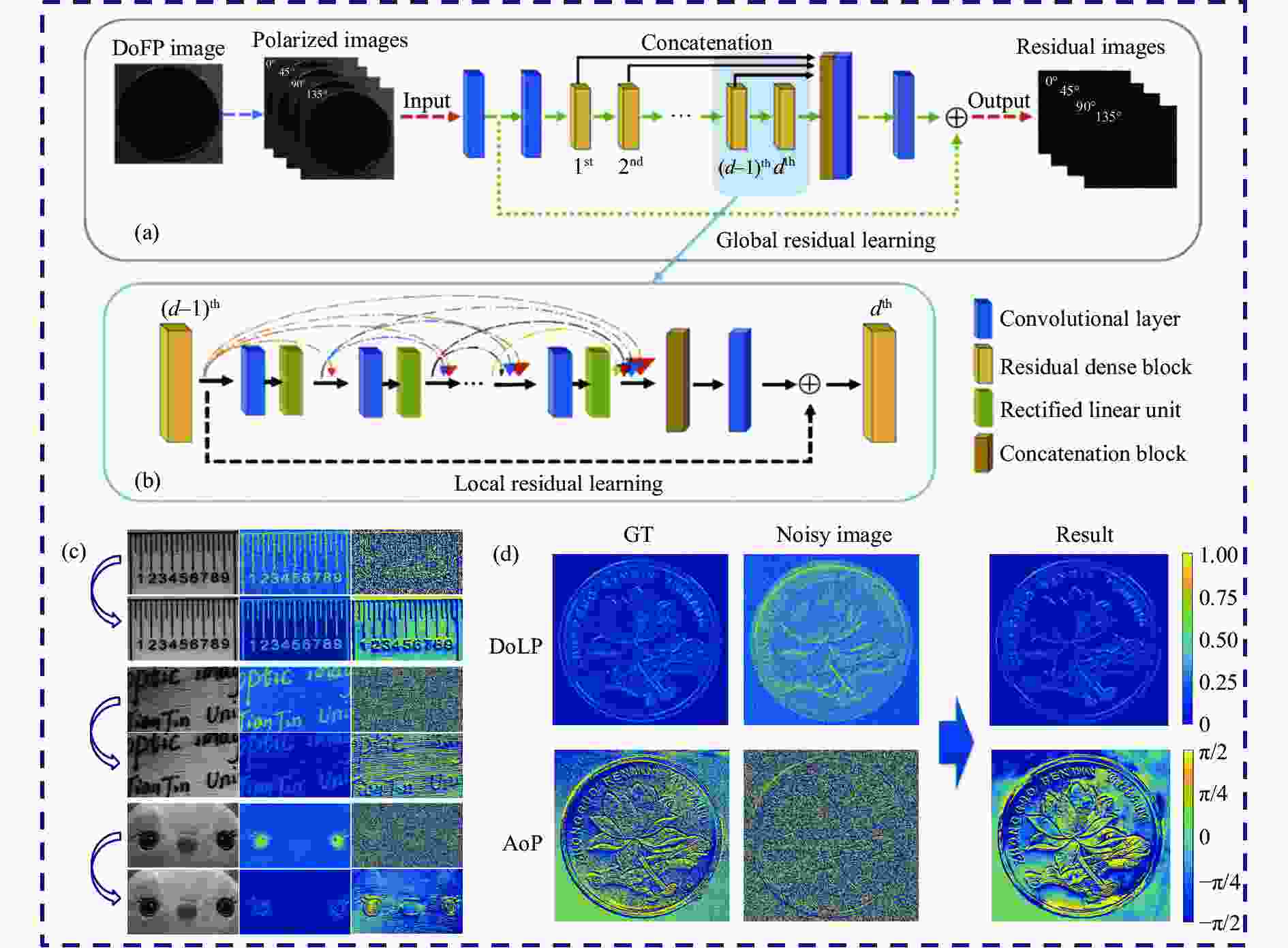

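The team of Hu Haofeng at Tianjin University was the first to propose a polarimetric residual dense network (PDN) for complex underwater imaging [53]. Its structure is shown in Figure 5(a): Polarimetric-Net takes three polarization images at different angles as input, whereas Intensity-Net takes a single intensity image. This design was used to verify that descattering with multi-dimensional polarization input is far better than with the intensity image alone. Multi-dimensional polarization information thus gives the network additional feature dimensions and benefits restoration. The loss is a pixel-level loss on the images at different polarization angles. Figure 5(b) compares the results of different methods: the restored result is best both subjectively and by the objective EME (measure of enhancement) metric [54]. In 2022, a team at Zhejiang Sci-Tech University proposed a residual dense network for image recovery in highly turbid, strongly scattering water [55]. The work extends PDN to high turbidity and achieves good restoration of polarization parameter images where the polarization information is degraded more severely.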

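In 2024, the team of Liu Fei at Xidian University proposed a 3D reconstruction method for targets in turbid water. A deep-learning descattering network first restores the turbid-water image; the DoP and AoP of the restored image are then used to obtain a high-quality 3D reconstruction of the underwater target [56]. Figure 6 shows the originally captured images of aquatic animals and the 3D reconstruction results.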

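All of the above work is data-driven. This approach needs data at a certain scale, and building a large polarization dataset for scattering environments is costly and inefficient. Some work addresses this difficulty directly. A team at Hefei University of Technology used a Monte Carlo algorithm [57] to simulate the degradation of the polarization information acquired by an active polarization imaging system in a scattering environment, and in this way generated a large paired dataset of scattering-degraded images and clear target images. Generative adversarial networks (GANs) are an unsupervised approach that does not require fully paired images for training [58]. On this basis, the cycle-consistent GAN (CycleGAN) was proposed for image style transfer [59]. Inspired by this, a Tianjin University team treated polarization descattering as analogous to style transfer and proposed an unsupervised GAN that fuses multi-dimensional polarization information for underwater descattering, called U2R-pGAN. The network structure is shown in Figure 7(a) and the restoration results in Figure 7(b) [60]. This work both eases the data acquisition problem and shows that a loss over multi-dimensional polarization parameters improves the network's extraction of polarization image features.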

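Self-supervised learning automatically constructs pseudo-labels from unlabeled data to drive training [61]; here a physical model serves as the bridge for constructing the pseudo-labels. A team at Nanjing University of Science and Technology used a polarization-difference physical degradation model to construct label data for training [62]. A team at the University of Hong Kong proposed a self-supervised, untrained network architecture for polarization descattering [63]: from the network output, the physical model based on AoP and the Stokes vector proposed by a Shaanxi Normal University team [64-65] generates a degraded polarization parameter image, and the loss is computed between this generated image and the input image. Simulation, unsupervised training, and self-supervised training are therefore all ways to address the complexity of acquisition systems, the low acquisition efficiency, and the high cost of manual screening and labeling in scattering environments.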

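Data-driven methods depend heavily on data and treat the model as a black box that learns the mapping between inputs and label images. Training data cannot cover every scattering environment, so such methods are limited when conditions differ. They may also produce results that contradict physical knowledge, and they require many trainable parameters, which lowers efficiency. Embedding a physical model and prior knowledge in the network structure strengthens the network's ability to learn and reason about the degradation of polarization information, with the aim of adapting to different scattering conditions. Because the physical model guides and constrains training, this approach can markedly improve learning efficiency, generalization, and physical interpretability. It is typically used where descattering of polarization parameter images is needed across different scattering environments.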

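The team of Shi Boxin at Peking University proposed a physics-embedded descattering network for hazy images [49]. It consists of four sub-networks that estimate and refine the two key parameters of the physical model, as shown in Figure 8(a), offering a new perspective on fusing physical models with neural networks. Figure 8(b) shows how hazy images are synthesized from clear images, and Figure 8(c) shows the restoration of a set of distant degraded images captured in a real environment. The magnified regions show good descattering of detail on distant buildings, but on close inspection some scattered signal remains and the descattering is incomplete. A possible reason is that the training set was built by synthesizing hazy images from depth images. The work shows that networks trained with an embedded physical model have some generalization capability.

A Tianjin University team proposed a physics-embedded network for underwater descattering, called PUM2-Net [66], which merges the two key parameters of the atmospheric degradation model into one, reducing the degrees of freedom to be learned and making convergence easier. In 2024, a team at Hefei University of Technology proposed a prior-guided dynamic polarization fusion network [67] that dynamically adjusts the weights assigned to different polarization components and thus adapts to different scattering environments. It has three branch networks whose inputs are the $S_0$, $S_1$, and degree-of-linear-polarization images, respectively. The spectrum of the intensity image is fed to a gating network that determines the fusion weights of the three branches.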

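Overall, two approaches have been used for deep-learning-based polarization imaging in scattering environments: data-driven methods and physics-embedded methods. After the technique was first proposed, researchers used unsupervised and self-supervised learning and simulated data to address the lack of public datasets and the difficulty of collecting data at scale. Physics-embedded methods are still at an early stage; researchers hope that embedding a physical model or using prior knowledge as guidance will improve generalization across complex environments. Current results indicate that, in terms of restoration quality, physics-embedded methods show no marked advantage over data-driven ones. A possible reason is that the physical degradation models ignore forward-scattered light and the absorption of the signal, and so cannot fully describe how polarization parameters degrade in a scattering medium.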

-

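Because polarization information is extremely sensitive to noise, obtaining high-quality polarization parameter images at the very low signal-to-noise ratios of low-light conditions is challenging. Noise does not propagate into the polarization parameters in a simple linear way: parameters such as DoP and AoP are nonlinear functions of the measured signal. This nonlinearity makes the interpretation of polarization parameter images unstable and susceptible to noise, which makes accurate polarization information about the target harder to obtain in noisy environments. Figure 9 shows a set of images captured under real low-light conditions. At the same noise level, polarization parameter images computed by nonlinear operations are degraded far more than the $\boldsymbol{S}$ images obtained by linear operations; the AoP image is affected most, with the target almost completely invisible. Solving noise-induced degradation of polarization parameter images is therefore key to applying polarization imaging in low-illumination environments.

Classical denoising methods for polarization parameter images usually exploit image sparsity and implicit relations between local pixels; they include K-SVD [68], non-local sparse representation [69], and block-matching and 3D filtering (BM3D) [70]. They improve polarization imaging quality in noise through digital image processing and apply fairly generally across noise conditions, but the restoration is often unsatisfactory, especially for very weak polarization signals in high noise. The strong feature extraction and learning capability of deep learning makes high-quality polarization imaging at very low signal-to-noise ratio possible.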

Figure 9. Noise transfer when calculating polarization parameters (the red rectangle indicates the enlarged area)
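The different noise sensitivity of linear and nonlinear polarization parameters can be reproduced with a small Monte Carlo experiment. The sketch below is illustrative only: it assumes additive zero-mean Gaussian noise of equal standard deviation on three intensity measurements (0°, 45°, 90°) of a weakly polarized pixel, and the chosen values (`sigma`, a true degree of linear polarization of 0.05) are made up for the demonstration.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 20000  # number of noisy realizations of the same pixel

# Assumed ground truth: weakly polarized light with AoP = 0.
s0_true, dolp_true, aop_true = 1.0, 0.05, 0.0
s1_true = s0_true * dolp_true * np.cos(2 * aop_true)
s2_true = s0_true * dolp_true * np.sin(2 * aop_true)

# Clean intensities behind an ideal polarizer at 0, 45 and 90 degrees.
clean = np.array([
    0.5 * (s0_true + s1_true),
    0.5 * (s0_true + s2_true),
    0.5 * (s0_true - s1_true),
])
sigma = 0.02
noisy = clean[:, None] + rng.normal(0.0, sigma, size=(3, n))
i0, i45, i90 = noisy

# Linear step: Stokes components.
s0 = i0 + i90
s1 = i0 - i90
s2 = 2 * i45 - s0

# Nonlinear step: degree of linear polarization and AoP.
dolp = np.sqrt(s1 ** 2 + s2 ** 2) / s0
aop = 0.5 * np.arctan2(s2, s1)

rel_err_s0 = np.mean(np.abs(s0 - s0_true)) / s0_true
rel_err_dolp = np.mean(np.abs(dolp - dolp_true)) / dolp_true
aop_err_deg = np.degrees(np.mean(np.abs(aop - aop_true)))
print(rel_err_s0, rel_err_dolp, aop_err_deg)
```

Under these assumptions the relative error of the intensity stays small while the errors of the degree and angle of polarization are much larger, which matches the qualitative behavior described for Figure 9.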

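In 2020 the team of Hu Haofeng at Tianjin University proposed the first polarization image denoising network based on a residual dense network, called PDRDN [71]. Figure 10(a) shows its structure, which has three parts: a shallow feature extraction module, residual dense blocks (RDBs), and a dense feature fusion module. The input is the set of polarization images at different angles obtained by splitting the raw image from the polarization detector; the output is the residual between the noisy and noise-free images, and the loss is defined on the residual images at the different polarization angles. Local residual learning combines densely connected layers with local feature fusion, as shown in Figure 10(b): the output of the (d−1)-th RDB is connected directly to every layer of the d-th RDB and feeds the input of the (d+1)-th RDB. Local feature fusion further improves the flow of information and gradients and the network's ability to represent features at different levels. Figure 10(c) shows test results on common materials, including plastic, wood, and fabric, to verify that the method generalizes to some extent. Figure 10(d) compares the restored polarization parameters at very low signal-to-noise ratio with the ground truth.

A team at the University of Connecticut proposed a CNN model for polarimetric 3D integral imaging in low illumination, referred to as a DnCNN model, which improves reconstructed image quality in low-light, partially occluded scenes [72]. In integral imaging, a 3D image of the scene is obtained by recording 2D images from multiple viewpoints and reconstructing the scene computationally or optically. The network input is a dataset simulated with a zero-mean Gaussian noise model, and the output is a noise residual image similar to those of refs. [71] and [73]. The method was tested on real polarization images captured under partial occlusion and low illumination and compared with total variation (TV) denoising; as Figure 11 shows, the model performs best at low signal-to-noise ratio with partial occlusion.

Ref. [74] extended the task from monochrome to multi-dimensional color polarization images and proposed an intensity-polarization network for low-light restoration. Because intensity and polarization respond differently to noise, the structure consists of two cascaded sub-networks, one restoring intensity and one restoring polarization; it denoises well on both static and moving targets in outdoor scenes.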

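The methods above are data-driven. Polarization denoising likewise faces inefficient dataset acquisition and high labeling cost, which researchers have tried to address by changing how networks are trained. Transfer learning transfers knowledge from a source domain to a target domain: a network is pretrained on public high-quality data (the source domain), and a small amount of acquired data (the target domain) is then used to fine-tune its parameters [75]. Ref. [76] performs transfer learning by fine-tuning, on a small polarization dataset, a denoising model pretrained on a large color image dataset. With this design, denoising performance nearly matches that obtained with a large dataset, and the polarization parameters are recovered well. Ref. [77] proposed an unsupervised training method based on CycleGAN [59] with DoP and AoP losses. Experiments show that the network suppresses noise in DoP and AoP images in various indoor and outdoor environments and yields good restoration.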

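Supervised training requires large amounts of labeled data, has high labeling cost, and limits generalization. Unsupervised training instead uses unlabeled data, letting the model discover latent structure and patterns and understand the data more fully. In 2018 a team at NVIDIA proposed Noise2Noise, showing mathematically that training on two mutually independent zero-mean noisy images of each scene is equivalent to supervised training on noisy/clean pairs [78]. Inspired by this, a Tianjin University team proposed an unsupervised network for polarization image denoising. Figure 12(a) and (b) show the pipeline of the Pol2Pol method [79], which builds a polarization generator from the relations among the Stokes components; the generator produces a noisy image that is independent of the original polarization channel image. The four noisy polarization images at different angles are fed to four sub-networks, and the restored polarization parameters of targets of different materials are close to those of the supervised counterpart Pol2GT in both visual quality and objective metrics, as shown in Figure 12(c).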
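One plausible way to obtain a second, independently noisy copy of a polarization channel from the relations among Stokes components is the redundancy $I_0 + I_{90} = I_{45} + I_{135} = S_0$. The sketch below illustrates that idea only; it is an assumption about how such a generator could work, not a description of the implementation in ref. [79]. It assumes four channels with independent zero-mean Gaussian noise, and all names and values are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 100000  # noisy samples of one pixel, standing in for many pixels

# Assumed clean linear Stokes components of the pixel.
s0, s1, s2 = 1.0, 0.3, 0.4
clean = {
    0: 0.5 * (s0 + s1),
    45: 0.5 * (s0 + s2),
    90: 0.5 * (s0 - s1),
    135: 0.5 * (s0 - s2),
}
sigma = 0.05
noisy = {a: v + rng.normal(0.0, sigma, n) for a, v in clean.items()}

def generate_i0(channels):
    """Second estimate of I0 that never touches the measured I0 channel."""
    return channels[45] + channels[135] - channels[90]

i0_generated = generate_i0(noisy)

# The generated image has the same clean content as I0, and its noise is
# uncorrelated with the noise in the measured I0 (Noise2Noise-style pair).
noise_measured = noisy[0] - clean[0]
noise_generated = i0_generated - clean[0]
corr = np.corrcoef(noise_measured, noise_generated)[0, 1]
print(i0_generated.mean(), corr)
```

The generated copy has three times the noise variance of a single channel, since it sums three independent measurements; the pair is still usable for Noise2Noise-style training because only zero mean and independence are required.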

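In addition to the methods above, researchers have recently proposed innovations in network structure, such as attention mechanisms and 3D convolution. Using the selective property of attention and the ability of 3D convolution to extract multi-dimensional information (polarization, color, space) improves feature representation and yields excellent denoising. Ref. [80] proposed a residual dense network with channel attention, focusing on how channel attention selects feature maps and making the denoising process more explicit. Visualized feature maps show that attention tends to enhance the feature maps that contain polarization parameters of the target (such as details and edges) and to suppress those that contain noise. Figure 13 compares the restoration of this method with that of established methods such as BM3D [70] and PDRDN [71]; those two methods improve detail, but some pixel values in their results are biased. Ref. [81] proposed a 3D CNN for denoising color polarization images, using 3D convolution to extract information across the spatial, color, and polarization dimensions and exploit the correlation among polarization parameters. Visualized feature maps also confirm that the polarization parameters remain correlated during training.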

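Deep-learning-based polarization imaging in noisy environments has gone through three stages. The first used supervised end-to-end training and achieved a degree of denoising, but faced the high cost of acquiring and labeling real data. The second explored unsupervised, self-supervised, and transfer learning, as well as simulation of the noise process, to solve the dataset problem of the first stage, and achieved good denoising with small or unpaired datasets. In the third, researchers investigated interpretability, trying to explain from feature maps how the network denoises and why the relations among polarization parameters are preserved. Overall, the technique in essence uses the images at different polarization angles as prior information: noise in these images is not pronounced, and the network learns their implicit correlations to guide training, achieving marked denoising of the severely degraded higher-order parameters DoP and AoP.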

-

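The preceding sections reviewed progress in deep-learning-based polarization imaging for the two typical complex environments, scattering and noise. To show the connections and differences among the works, trace the development of the field, and aid reference, they are summarized in Table 1, which lists the task type, training method, and characteristics of each representative work. Early work used supervised training; because real data are hard to acquire, researchers explored unsupervised, self-supervised, and transfer learning and simulation. Researchers then explored networks that combine prior knowledge and physical models, leading to physics-embedded or prior-guided training, and tried to explain from feature maps the restoration results and the preservation of relations among polarization parameters. Overall, these representative works contribute to easing large-scale dataset construction, improving generalization, and exploring interpretability.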

Table 1. Summary of representative work on deep-learning-based polarization imaging in complex environments

| Task | Reference | Training method | Characteristics of representative work |
|---|---|---|---|
| Descattering | [53] | Supervised/data-driven | First use of a residual dense network for descattering of underwater polarization images |
| Descattering | [55] | Supervised/data-driven | Descattering in highly turbid water |
| Descattering | [56] | Supervised/data-driven | High-precision three-dimensional imaging of targets in turbid water |
| Descattering | [57] | Supervised/data-driven | Monte Carlo simulation of the polarization information degradation process |
| Descattering | [60] | Unsupervised/data-driven | Training does not require paired datasets |
| Descattering | [62] | Self-supervised/data-driven | Physical degradation model generates pseudo-label data to drive network training |
| Descattering | [63] | Self-supervised/data-driven | Stokes-based descattering model replaces the network backpropagation process |
| Descattering | [49] | Supervised/physical model embedded | Physical degradation model embedded in the network; the key parameters of the model are fitted |
| Descattering | [66] | Supervised/physical model embedded | Physical model parameters merged to reduce the degrees of freedom of network fitting |
| Descattering | [67] | Supervised/prior knowledge guidance | Spectral information of the intensity image is fed to a gating network that assigns sub-network weights |
| Denoising | [71] | Supervised/data-driven | First denoising of polarization images in low-light environments |
| Denoising | [72] | Supervised/data-driven | Denoising of three-dimensional polarization images under low illumination and partial occlusion |
| Denoising | [74] | Supervised/data-driven | Denoising of color polarization images |
| Denoising | [76] | Supervised/data-driven | Transfer learning for polarization image denoising with small sample data |
| Denoising | [77] | Unsupervised/data-driven | Training does not require paired datasets |
| Denoising | [79] | Self-supervised/data-driven | Polarization generator enables self-supervised training that matches the denoising effect of supervised training |
| Denoising | [80] | Supervised/data-driven | Explores the underlying principles of polarization image denoising through feature map screening by an attention mechanism |
| Denoising | [81] | Supervised/data-driven | Analyzes, from feature maps, why the relationship between polarization information is maintained during network training |

-

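With the rapid development of deep learning, polarization imaging in complex environments has made notable progress. This paper introduced the basic principles of polarization imaging and discussed the paradigm of deep-learning-based polarization imaging in complex environments. It then reviewed progress for scattering and noise, the two most representative complex environments, and summarized recent results obtained with deep learning strategies. Existing work shows that because polarization information comprises multiple correlated parameters, this multi-dimensional, correlated signal processing problem is well suited to deep learning. Combining deep learning with polarization imaging further improves optical imaging quality and meets the imaging needs of complex environments.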

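Nevertheless, many challenges still hinder the field, such as insufficient generalization and interpretability. A model trained on data from one specific complex environment generalizes poorly to extreme or unseen scenes, which limits its use in other scattering environments. Some work tries to improve generalization across scenes by embedding physical models in the network, but because the models cannot fully describe the real degradation of polarization information, restoration has fallen short of expectations. The imaging mechanism in complex environments is highly intricate; multiple scattering, illumination changes, and other factors cause loss of detail and image distortion, calling for finer and more robust algorithm design to ensure the reliability and effectiveness of deep learning for polarization imaging in complex environments. The physical processes that degrade polarization parameter images therefore need to be considered comprehensively, along with how to embed unsimplified physical models in the network.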

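Network interpretability is still lacking. Future work should keep pace with advances in deep learning and, starting from mathematical principles and image feature maps, gradually clarify the underlying principles of deep-learning-based polarization imaging in complex environments.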

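Multimodal fusion is one future direction for the field. It fuses information of different types or sources so that heterogeneous modalities complement one another and overall system performance improves. For underwater descattering, for example, fusing sonar acoustic images with optical images by deep learning might surpass the descattering results reported so far, although acousto-optic fusion currently faces problems such as the difficulty of calibrating heterogeneous data.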

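Conventional polarization imaging faces a series of challenges in scattering, noisy, and other complex environments. Applying deep learning to polarization parameter image restoration, polarization parameter estimation, and end-to-end feature learning has already improved polarization image quality to some extent and recovered target properties more accurately, offering an effective way to overcome the limitations of conventional polarization image processing. Future work should further refine physics-embedded networks to improve generalization, improve interpretability, explore multimodal fusion, consolidate the feasibility of deep learning models for polarization imaging in complex environments, and strengthen adaptability to environmental change so that models apply across scenes.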

Research progress on polarimetric imaging technology in complex environments based on deep learning (invited)

-

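Summary: By acquiring and interpreting polarization information, polarization imaging can suppress interference from complex environments, improve image quality, and enhance target perception, which gives it unique advantages for optical imaging and detection in complex environments. However, in complex environments such as scattering and low illumination, the degradation of polarization images is nonlinear and the interpretation of polarization information is highly complex. Deep learning has strong feature extraction and learning capability and restores polarization information by learning the mapping hidden in large-scale data, which suits polarization imaging, a multi-dimensional and mutually correlated signal processing problem, particularly well. Based on the basic principles of polarization imaging and the paradigm of polarization imaging in complex environments, this paper reviews progress in deep-learning-based polarization imaging for scattering and noise, two typical imaging environments, explains the advantages that deep learning brings to polarization imaging in complex environments, and closes with an outlook on future directions.

Abstract: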

Significance Polarization information, as one of the fundamental physical characteristics of light waves, can provide information about the intrinsic properties of the target. Polarimetric imaging technology digitizes the polarization information of the measured target field through digital processing. This approach effectively reduces the interference from the light propagation environment, thereby improving the imaging quality of the target and enhancing perception of its characteristics. In complex environments, polarimetric imaging has significant advantages. However, in complex environments such as scattering and low illumination, the degradation mechanism of polarized images exhibits nonlinear characteristics, leading to high complexity in polarized information interpretation methods. Deep learning methods possess powerful feature extraction and learning capabilities, enabling the recovery of polarized information by learning the mapping rules hidden in the large-scale collected data. This approach is particularly suitable for complex signal processing problems like polarimetric imaging, which involves multiple dimensions and interrelated signals. Progress First, the basic theory of the polarimetric imaging is introduced, including the principles of polarimetric imaging and a macroscopic description of polarimetric imaging issues in complex environments. Next, the general workflow of deep learning polarization imaging technology in complex environments is introduced. Based on deep learning, polarimetric imaging technology in complex environments uses the multi-dimensional polarimetric parameters collected by the polarimetric imaging system as input data. It leverages the nonlinear feature-fitting capabilities of neural networks to obtain image restoration results. 
Essentially, this approach transforms the nonlinear inverse problem of polarimetric imaging restoration in complex environments into a pseudo-forward problem, avoiding the challenges associated with solving nonlinear inverse problem algorithms. The representative developments of research in deep learning polarimetric imaging technology in response to scattering and noise, two of the most representative complex imaging environments, have been elaborated. From the inception of research in this field, the developmental trajectory of the field has been systematically outlined. In the early stages, polarimetric imaging technology in complex environments based on deep learning primarily relied on supervised training. Due to the challenges in collecting real-world data, researchers explored solutions using unsupervised, self-supervised, transfer learning, and simulation algorithms. Researchers also delved into the incorporation of prior knowledge and physical models into networks, leading to training approaches embedded with physical models or guided by prior knowledge. Overall, these representative works have made significant contributions to addressing the difficulties in constructing large-scale datasets, enhancing the generalization performance of networks, and exploring the interpretability of the networks. To better illustrate the connections and distinctions among research works, and to streamline the developmental process in this field for reader convenience, a summary has been compiled in the form of a table. The table provides task types, training methods, and characteristics of representative works for easy reference. Conclusions and Prospects With the rapid development of deep learning, polarimetric imaging technology in complex environments has achieved remarkable research progress. 
Existing studies indicate that, due to the multiple parameters and inherent correlations in polarized information, this multi-dimensional and interrelated signal processing problem is well-suited for the application of deep learning. The combination of deep learning and polarimetric imaging technology enables further improvement in optical imaging quality, meeting the imaging demands of complex environments and demonstrating more prominent advantages. The generalization ability, interpretability, and parameter lightweighting of deep learning technology remain areas that require further in-depth research. There is a continued need for refinement in multimodal fusion strategies, exploration of the underlying principles of network polarimetric parameter image restoration, and the design of network structures tailored for polarized multidimensional data to enhance real-time performance. Further efforts are essential to consolidate the feasibility of deep learning models in polarimetric imaging within complex environments, to enhance the adaptability of models to changes in complex environmental conditions, and to make them more universally applicable across different scenarios. -

Key words: polarization imaging; deep learning; image descattering; image denoising

-


[1] Goldstein D H. Polarized Light[M]. Boca Raton: CRC Press, 2017.

[2] Liu X, Zhang L, Zhai X, et al. Polarization lidar: Principles and applications[C]//Photonics. MDPI, 2023, 10(10): 1118.

[3] Li X, Yan L, Qi P, et al. Polarimetric imaging via deep learning: A review [J]. Remote Sensing, 2023, 15(6): 1540. doi: 10.3390/rs15061540

[4] Breugnot S, Clemenceau P. Modeling and performances of a polarization active imager at λ= 806 nm [J]. Optical Engineering, 2000, 39(10): 2681-2688. doi: 10.1117/1.1286140

[5] Marino A, Dierking W, Wesche C. A depolarization ratio anomaly detector to identify icebergs in sea ice using dual-polarization SAR images [J]. IEEE Transactions on Geoscience and Remote Sensing, 2016, 54(9): 5602-5615. doi: 10.1109/TGRS.2016.2569450

[6] Dong Y, Wan J, Wang X, et al. A polarization-imaging-based machine learning framework for quantitative pathological diagnosis of cervical precancerous lesions [J]. IEEE Transactions on Medical Imaging, 2021, 40(12): 3728-3738. doi: 10.1109/TMI.2021.3097200

[7] Ramella-Roman J C, Novikova T. Polarized Light in Biomedical Imaging and Sensing: Clinical and Preclinical Applications[M]. Berlin: Springer, 2022.

[8] Novikova T, Ramella-Roman J C. Is a complete Mueller matrix necessary in biomedical imaging? [J]. Optics Letters, 2022, 47(21): 5549-5552. doi: 10.1364/OL.471239

[9] Sun M, He H, Zeng N, et al. Characterizing the microstructures of biological tissues using Mueller matrix and transformed polarization parameters [J]. Biomedical Optics Express, 2014, 5(12): 4223-4234. doi: 10.1364/BOE.5.004223

[10] Liu F, Wei Y, Han P, et al. Polarization-based exploration for clear underwater vision in natural illumination [J]. Optics Express, 2019, 27(3): 3629-3641. doi: 10.1364/OE.27.003629

[11] Li X, Hu H, Zhao L, et al. Polarimetric image recovery method combining histogram stretching for underwater imaging [J]. Scientific Reports, 2018, 8(1): 12430. doi: 10.1038/s41598-018-30566-8

[12] Hu H, Qi P, Li X, et al. Underwater imaging enhancement based on a polarization filter and histogram attenuation prior [J]. Journal of Physics D: Applied Physics, 2021, 54(17): 175102. doi: 10.1088/1361-6463/abdc93

[13] He C, He H, Chang J, et al. biomedical and clinical applications: a review [J]. Light: Science & Applications, 2021, 10(1): 194.

[14] Zuo Chao, Chen Qian. Computational optical imaging: An overview [J]. Infrared and Laser Engineering, 2022, 51(2): 20220110. (in Chinese) doi: 10.3788/IRLA20220110

[15] Han P, Liu F, Wei Y, et al. Optical correlation assists to enhance underwater polarization imaging performance [J]. Optics and Lasers in Engineering, 2020, 134: 106256. doi: 10.1016/j.optlaseng.2020.106256

[16] Li X, Xu J, Zhang L, et al. Underwater image restoration via Stokes decomposition [J]. Optics Letters, 2022, 47(11): 2854-2857. doi: 10.1364/OL.457964

[17] Shen X, Carnicer A, Javidi B. Three-dimensional polarimetric integral imaging under low illumination conditions [J]. Optics Letters, 2019, 44(13): 3230-3233. doi: 10.1364/OL.44.003230

[18] Schechner Y Y, Narasimhan S G, Nayar S K. Polarization-based vision through haze [J]. Applied Optics, 2003, 42(3): 511-525. doi: 10.1364/AO.42.000511

[19] Schechner Y Y, Karpel N. Recovery of underwater visibility and structure by polarization analysis [J]. IEEE Journal of oceanic engineering, 2005, 30(3): 570-587. doi: 10.1109/JOE.2005.850871

[20] Faisan S, Heinrich C, Rousseau F, et al. Joint filtering estimation of Stokes vector images based on a nonlocal means approach [J]. JOSA A, 2012, 29(9): 2028-2037. doi: 10.1364/JOSAA.29.002028

[21] Faisan S, Heinrich C, Sfikas G, et al. Estimation of Mueller matrices using non-local means filtering [J]. Optics Express, 2013, 21(4): 4424-4438. doi: 10.1364/OE.21.004424

[22] Zuo C, Qian J, Feng S, et al. Deep learning in optical metrology: a review [J]. Light: Science & Applications, 2022, 11(1): 39.

[23] Barbastathis G, Ozcan A, Situ G. On the use of deep learning for computational imaging [J]. Optica, 2019, 6(8): 921-943. doi: 10.1364/OPTICA.6.000921

[24] Luo Haibo, Zhang Junchao, Gai Xingqin, et al. Development status and prospects of polarization imaging technology (Invited) [J]. Infrared and Laser Engineering, 2022, 51(1): 20210987. (in Chinese)

[25] Liu Fei, Sun Shaojie, Han Pingli, et al. Clear underwater vision in non-uniform scattering field by low-rank-and-sparse-decomposition-based polarization imaging [J]. Acta Phys Sin, 2021, 70(16): 164201. (in Chinese) doi: 10.7498/aps.70.20210314

[26] LeCun Y, Bengio Y, Hinton G. Deep learning [J]. Nature, 2015, 521(7553): 436-444. doi: 10.1038/nature14539

[27] Chai J, Zeng H, Li A, et al. Deep learning in computer vision: A critical review of emerging techniques and application scenarios [J]. Machine Learning with Applications, 2021, 6: 100134. doi: 10.1016/j.mlwa.2021.100134

[28] Lauriola I, Lavelli A, Aiolli F. An introduction to deep learning in natural language processing: Models, techniques, and tools [J]. Neurocomputing, 2022, 470: 443-456. doi: 10.1016/j.neucom.2021.05.103

[29] Li X, Han Y, Wang H, et al. Polarimetric imaging through scattering media: A review [J]. Frontiers in Physics, 2022, 10: 815296. doi: 10.3389/fphy.2022.815296

[30] Li Zhiyuan, Zhai Aiping, Ji Yingze, et al. Research, application and progress of optical polarization imaging technology [J]. Infrared and Laser Engineering, 2023, 52(9): 20220808. (in Chinese) doi: 10.3788/IRLA20220808

[31] Kliger D S, Lewis J W. Polarized Light in Optics and Spectroscopy[M]. Amsterdam: Elsevier, 2012.

[32] Wang Xia, Zhang Mingyang, Chen Zhenyue, et al. Overview on system structure of active polarization imaging [J]. Infrared and Laser Engineering, 2013, 42(8): 2244-2251. (in Chinese)

[33] Lu S Y, Chipman R A. Interpretation of Mueller matrices based on polar decomposition [J]. JOSA A, 1996, 13(5): 1106-1113. doi: 10.1364/JOSAA.13.001106

[34] Sheng S, Chen X, Chen C, et al. Eigenvalue calibration method for dual rotating-compensator Mueller matrix polarimetry [J]. Optics Letters, 2021, 46(18): 4618-4621. doi: 10.1364/OL.437542

[35] Smith M H. Optimization of a dual-rotating-retarder Mueller matrix polarimeter [J]. Applied Optics, 2002, 41(13): 2488-2493. doi: 10.1364/AO.41.002488

[36] Liu F, Han P, Wei Y, et al. Deeply seeing through highly turbid water by active polarization imaging [J]. Optics Letters, 2018, 43(20): 4903-4906. doi: 10.1364/OL.43.004903

[37] Hu H, Huang Y, Li X, et al. UCRNet: Underwater color image restoration via a polarization-guided convolutional neural network [J]. Frontiers in Marine Science, 2022, 9: 1031549. doi: 10.3389/fmars.2022.1031549

[38] Li Z, Jiang H, Cao M, et al. Polarized color image denoising [C]//2023 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR). IEEE, 2023: 9873-9882.

[39] Xu X, Wan M, Ge J, et al. ColorPolarNet: Residual dense network-based chromatic intensity-polarization imaging in low-light environment [J]. IEEE Transactions on Instrumentation and Measurement, 2022, 71: 1-10.

[40] Ding X, Wang Y, Fu X. Multi-polarization fusion generative adversarial networks for clear underwater imaging [J]. Optics and Lasers in Engineering, 2022, 152: 106971. doi: 10.1016/j.optlaseng.2022.106971

[41] Lin B, Fan X, Guo Z. Self-attention module in a multi-scale improved U-net (SAM-MIU-net) motivating high-performance polarization scattering imaging [J]. Optics Express, 2023, 31(2): 3046-3058. doi: 10.1364/OE.479636

[42] He K, Zhang X, Ren S, et al. Deep residual learning for image recognition[C]//Proceedings of the IEEE conference on computer vision and pattern recognition, 2016: 770-778.

[43] Huang G, Liu Z, Van Der Maaten L, et al. Densely connected convolutional networks[C]//Proceedings of the IEEE conference on computer vision and pattern recognition, 2017: 4700-4708.

[44] Song Junhong, Xiao Zuojiang, Li Yingchao, et al. Influence of concentration variation of oil mist particles on scattering mueller matrix [J]. Acta Optica Sinica, 2021, 41(23): 2301001. (in Chinese)

[45] Su Lewei, Duan Cunli, Sun Liang, et al. Influence of optical polarization on underwater range-gated imaging for target recognition distance under different water quality conditions [J]. Infrared and Laser Engineering, 2024, 53(1): 20230372. (in Chinese)

[46] Ramella-Roman J C, Prahl S A, Jacques S L. Three Monte Carlo programs of polarized light transport into scattering media: part I [J]. Optics Express, 2005, 13(12): 4420-4438. doi: 10.1364/OPEX.13.004420

[47] Wang X, Hu T, Li D, et al. Performances of polarization-retrieve imaging in stratified dispersion media [J]. Remote Sensing, 2020, 12(18): 2895. doi: 10.3390/rs12182895

[48] Chen C, Chen Q, Xu J, et al. Learning to see in the dark[C]//Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018: 3291-3300.

[49] Zhou C, Teng M, Han Y, et al. Learning to dehaze with polarization [J]. Advances in Neural Information Processing Systems, 2021, 34: 11487-11500.

[50] Zhao H, Gallo O, Frosio I, et al. Loss functions for image restoration with neural networks [J]. IEEE Transactions on Computational Imaging, 2016, 3(1): 47-57.

[51] Guo Enlai, Shi Yingjie, Zhu Shuo, et al. Scattering imaging with deep learning: Physical and data joint modeling optimization (invited) [J]. Infrared and Laser Engineering, 2022, 51(8): 20220563. (in Chinese) doi: 10.3788/IRLA20220563

[52] Johnson J, Alahi A, Fei-Fei L. Perceptual losses for real-time style transfer and super-resolution [C]//Computer Vision–ECCV 2016: 14th European Conference, 2016: 694-711.

[53] Hu H, Zhang Y, Li X, et al. Polarimetric underwater image recovery via deep learning [J]. Optics and Lasers in Engineering, 2020, 133: 106152. doi: 10.1016/j.optlaseng.2020.106152

[54] Agaian S S, Panetta K, Grigoryan A M. A new measure of image enhancement [C]//IASTED International Conference on Signal Processing & Communication, 2000: 19-22.

[55] Xiang Y, Yang X, Ren Q, et al. Underwater polarization imaging recovery based on polarimetric residual dense network [J]. IEEE Photonics Journal, 2022, 14(6): 1-6.

[56] Yang K, Han P, Gong R, et al. High-quality 3D shape recovery from scattering scenario via deep polarization neural networks [J]. Optics and Lasers in Engineering, 2024, 173: 107934. doi: 10.1016/j.optlaseng.2023.107934

[57] Li D, Lin B, Wang X, et al. High-performance polarization remote sensing with the modified U-net based deep-learning network [J]. IEEE Transactions on Geoscience and Remote Sensing, 2022, 60: 1-10.

[58] Almahairi A, Rajeshwar S, Sordoni A, et al. Augmented cyclegan: Learning many-to-many mappings from unpaired data [C]//International Conference on Machine Learning, PMLR, 2018: 195-204.

[59] Zhu J Y, Park T, Isola P, et al. Unpaired image-to-image translation using cycle-consistent adversarial networks [C]//Proceedings of the IEEE International Conference on Computer Vision, 2017: 2223-2232.

[60] Qi P, Li X, Han Y, et al. U2R-pGAN: Unpaired underwater-image recovery with polarimetric generative adversarial network [J]. Optics and Lasers in Engineering, 2022, 157(10): 107112. doi: 10.1016/j.optlaseng.2022.107112

[61] Zoph B, Ghiasi G, Lin T Y, et al. Rethinking pre-training and self-training [J]. Advances in Neural Information Processing Systems, 2020, 33: 3833-3845.

[62] Shi Y, Guo E, Bai L, et al. Polarization-based haze removal using self-supervised network [J]. Frontiers in Physics, 2022, 9: 789232. doi: 10.3389/fphy.2021.789232

[63] Zhu Y, Zeng T, Liu K, et al. Full scene underwater imaging with polarization and an untrained network [J]. Optics Express, 2021, 29(25): 41865-41881. doi: 10.1364/OE.444755

[64] Liang Jian, Ju Haijuan, Zhang Wenfei, et al. Review of optical polarimetric dehazing technique [J]. Acta Optica Sinica, 2017, 37(4): 0400001. (in Chinese) doi: 10.3788/AOS201737.0400001

[65] Liang J, Ren L, Qu E, et al. Method for enhancing visibility of hazy images based on polarimetric imaging [J]. Photonics Research, 2014, 2(1): 38-44. doi: 10.1364/PRJ.2.000038

[66] Hu H, Han Y, Li X, et al. Physics-informed neural network for polarimetric underwater imaging [J]. Optics Express, 2022, 30(13): 22512-22522. doi: 10.1364/OE.461074

[67] Lin B, Fan X, Peng P, et al. Dynamic polarization fusion network (DPFN) for imaging in different scattering systems [J]. Optics Express, 2024, 32(1): 511-525. doi: 10.1364/OE.507711

[68] Li S, Ye W, Liang H, et al. K-SVD based denoising algorithm for DoFP polarization image sensors [C]//2018 IEEE International Symposium on Circuits and Systems (ISCAS). IEEE, 2018: 1-5.

[69] Buades A, Coll B, Morel J M. A non-local algorithm for image denoising [C]//Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2005: 60-65.

[70] Dabov K, Foi A, Katkovnik V, et al. Image denoising by sparse 3-D transform-domain collaborative filtering [J]. IEEE Transactions on Image Processing, 2007, 16(8): 2080-2095. doi: 10.1109/TIP.2007.901238

[71] Li X, Li H, Lin Y, et al. Learning-based denoising for polarimetric images [J]. Optics Express, 2020, 28(11): 16309-16321. doi: 10.1364/OE.391017

[72] Usmani K, O’Connor T, Javidi B. Three-dimensional polarimetric image restoration in low light with deep residual learning and integral imaging [J]. Optics Express, 2021, 29(18): 29505-29517. doi: 10.1364/OE.435900

[73] Zhang K, Zuo W, Chen Y, et al. Beyond a Gaussian denoiser: Residual learning of deep CNN for image denoising [J]. IEEE Transactions on Image Processing, 2017, 26(7): 3142-3155. doi: 10.1109/TIP.2017.2662206

[74] Hu H, Lin Y, Li X, et al. IPLNet: a neural network for intensity-polarization imaging in low light [J]. Optics Letters, 2020, 45(22): 6162-6165. doi: 10.1364/OL.409673

[75] Yosinski J, Clune J, Bengio Y, et al.
Advances in neural information processing systems [C]//Proceedings of the 27th International Conference on Neural Information Processing Systems, 2014: 3320–3328. [76] Hu H, Jin H, Liu H, et al. Polarimetric image denoising on small datasets using deep transfer learning [J]. Optics & Laser Technology, 2023, 166: 109632. [77] Hu Haofeng, Jin Huifeng, Li Xiaobo, et al. Polarization image denoising based on unsupervised learning [J]. Acta Optica Sinica, 2023, 43(4): 0410001. (in Chinese) [78] Lehtinen J, Munkberg J, Hasselgren J, et al. Noise2Noise: Learning image restoration without clean data [DB/OL]. (2018-03-12) [2024-02-29]. https://arxiv.org/abs/1803.04189. [79] Liu H, Li X, Cheng Z, et al. Pol2Pol: self-supervised polarimetric image denoising [J]. Optics Letters, 2023, 48(18): 4821-4824. doi: 10.1364/OL.500198 [80] Liu H, Zhang Y, Cheng Z, et al. Attention-based neural network for polarimetric image denoising [J]. Optics Letters, 2022, 47(11): 2726-2729. doi: 10.1364/OL.458514 [81] Liu H, Li X, Cheng Z, et al. Polarization maintaining 3-D convolutional neural network for color polarimetric images denoising [J]. IEEE Transactions on Instrumentation and Measurement, 2023, 72: 1-9. -
