
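Three-dimensional (3D) imaging acquires and records the complete geometry of a scene and is widely used in 3D scene reconstruction, high-precision positioning, industrial inspection, and other fields. Many 3D imaging approaches exist, such as structured-light vision[1-2], binocular stereo vision[3-4], photometric stereo[5], 3D laser imaging radar[6-7], and Time-of-Flight (ToF) cameras[8]. Compared with other 3D imaging techniques, ToF cameras are compact, low-cost, and capable of real-time depth imaging[9]. They are active depth-imaging devices that measure scene depth from the time light takes to travel between the camera and the target, and they are widely applied in machine vision[10-11], human-computer interaction[12], industrial automation[13], autonomous driving[14], target recognition[15], and other fields. However, when a ToF camera images a scattering scene such as fog, water, or biological tissue, the signal returned to the sensor is a mixture of light reflected by the target and scattered light, which causes large depth measurement errors[16]. This limits the use of ToF cameras in underwater topographic surveys, autonomous driving in fog, biomedicine, and similar applications, so improving ToF imaging quality in scattering scenes has attracted attention from researchers worldwide.

ToF imaging through scattering media is in essence a multipath interference correction problem. Multipath interference describes the situation in which a single pixel of the ToF camera receives multiple light returns from the scene[17], whereas the ToF imaging principle assumes that each pixel receives only a single return; multipath interference therefore causes large depth measurement errors. Many correction methods have been studied, including mixed-pixel separation[18-19], compressed sensing[20-22], multi-frequency separation[23-25], and deep learning[26-28]. Because ToF imaging through scattering media has its own distinctive properties, some studies specifically address multipath interference in scattering scenes, and this review focuses on methods for correcting scattering-induced multipath interference. Research on ToF imaging through scattering media falls into two areas, steady-state imaging and transient imaging. Steady-state imaging captures and records scene information after the light has reached a steady state, and commercial ToF cameras are generally steady-state imaging systems. Transient imaging records scene information at the instant of light propagation by capturing and storing a sequence of images of light travelling through the scene; it usually requires hardware modification of the ToF camera or complex computation to recover an accurate temporal response.

This review first introduces the basic structure and imaging principle of ToF cameras. It then describes the steady-state and transient ToF imaging processes in scattering scenes, together with the theory of and related research on imaging through scattering media, followed by the application prospects of ToF imaging through scattering media. Finally, based on the current state of research worldwide, it offers an outlook on future development trends.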


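ToF cameras date back to 1977, when a single-pixel sensor scanned the scene point by point to obtain depth information[29]. The lock-in CCD, introduced in 1995[30], made it possible to acquire the depth of all scene points simultaneously and was used in the first non-scanning ToF camera, the PMD[31]. Depending on whether the time of flight is measured directly, ToF cameras are divided into direct ToF (d-ToF) cameras and indirect ToF (i-ToF) cameras[32]. A d-ToF camera probes the scene with light pulses; an internal timer records the time difference between emission and reception, which gives the time of flight between the target and the camera and hence their distance. Compared with i-ToF cameras, d-ToF cameras offer higher measurement accuracy and a longer range, but lower image resolution, a more demanding fabrication process, and higher cost. They are currently used in automotive LiDAR, augmented reality (AR), and virtual reality (VR). In addition, because different light paths reach the detector at different times, a d-ToF camera imaging a scattering scene can use time gating to reduce the scattered light received by the sensor, so it is less affected by multipath interference. Research on ToF imaging through scattering media therefore usually targets i-ToF cameras.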

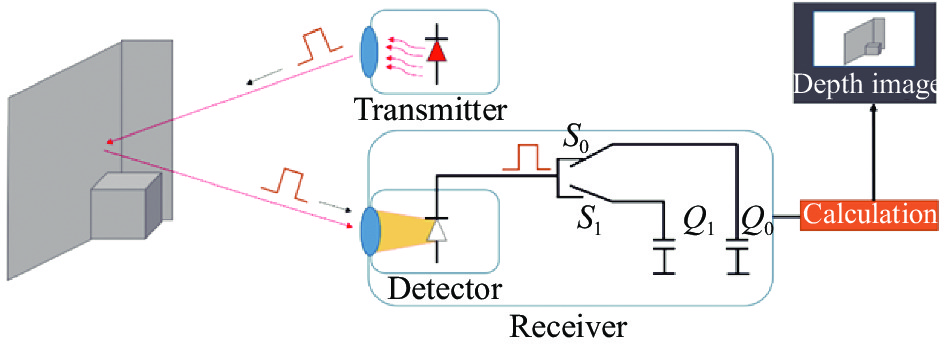

依据是否采用相关测量,i-ToF相机分为脉冲调制式ToF(Pulse-based ToF,简称PL-ToF)和连续波调制式ToF(Continuous-wave ToF,简称CW-ToF)。PL-ToF相机采用脉冲调制光信号,传感器接收来自探测场景返回的信号,接收电路的光开关

${S_0}$ 和${S_1}$ 交替闭合,对应的电容积累电荷,如图1所示,目标深度与积累电荷之间的关系为:$$ d = \frac{c}{2}{t_p}\frac{{{Q_1}}}{{{Q_0} + {Q_1}}} $$ (1) 式中:

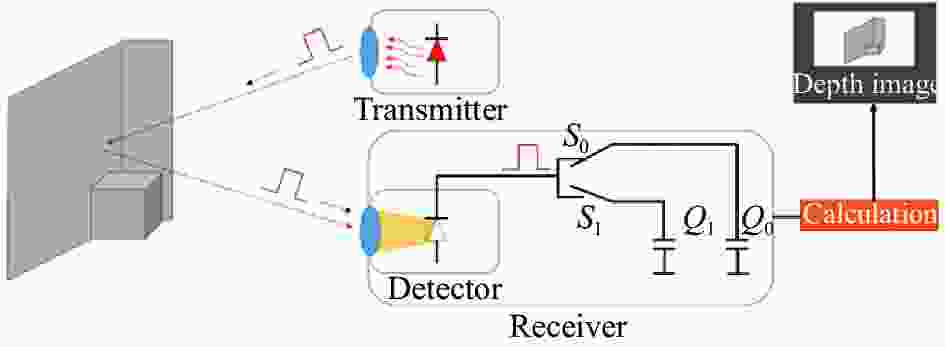

${t_p}$ 为调制信号的脉宽;${Q_0}$ 和${Q_1}$ 分别为开关${S_0}$ 和${S_1}$ 打开期间电容积累的电荷量;$c$ 是真空中的光速。CW-ToF相机成像过程如图2所示,光源经正弦信号调制,调制光信号经场景目标反射后,由ToF传感器接收,接收信号与参考信号进行相关,通过四步相移等方式获得接收信号和参考信号间的相位差,由相位差计算出目标深度[33]。

$$ d = \frac{{c \cdot \varphi }}{{4\pi {f_{\bmod }}}} $$ (2) 式中:

$\varphi $ 为接收信号和参考信号之间的相位差;${f_{\bmod }}$ 为光源的调制频率。两种i-ToF相机各具优势,CW-ToF相机测量精度高,但是计算较复杂、功耗大。PL-ToF相机因其测量原理的限制,在近距离和远距离处误差较大,测量精度偏低,容易受背景噪声和暗噪声影响[34],但是其计算更简单、计算量更低。i-ToF图像分辨率比d-ToF相机高,成本较低,但抗干扰能力较差。由于ToF成像能够提供场景各点的深度信息,有助于判断物体的形貌、距离等,广泛应用于目标识别、三维场景重构、机器视觉、人机交互等领域。
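The two depth formulas above can be checked numerically. The following is a minimal sketch, not any camera vendor's implementation. The four-sample model `a_k = B + A*cos(phi + k*pi/2)` and all function names are assumptions introduced for illustration; real sensors differ in sign convention and calibration.

```python
import math

C = 299_792_458.0  # speed of light in vacuum (m/s)


def pl_tof_depth(q0, q1, t_p):
    """Eq. (1): depth from the charges collected while S0 and S1 are on."""
    return 0.5 * C * t_p * q1 / (q0 + q1)


def four_step_phase(a0, a1, a2, a3):
    """Phase from four correlation samples.

    Assumed (hypothetical) sample model: a_k = B + A*cos(phi + k*pi/2),
    k = 0..3, so a3 - a1 = 2A*sin(phi) and a0 - a2 = 2A*cos(phi).
    """
    return math.atan2(a3 - a1, a0 - a2) % (2.0 * math.pi)


def cw_tof_depth(phi, f_mod):
    """Eq. (2): depth from the phase difference and the modulation frequency."""
    return C * phi / (4.0 * math.pi * f_mod)


def simulate_cw_samples(depth, f_mod, amplitude=1.0, offset=2.0):
    """Four ideal samples for a single return with no interference."""
    phi = 4.0 * math.pi * f_mod * depth / C
    return [offset + amplitude * math.cos(phi + k * math.pi / 2.0) for k in range(4)]


# PL-ToF: a target at 1.5 m delays the pulse by tau = 2d/c, so a fraction
# tau/t_p of the returned charge falls into the second window.
t_p = 30e-9
tau = 2.0 * 1.5 / C
print(pl_tof_depth(1.0 - tau / t_p, tau / t_p, t_p))

# CW-ToF: recover the depth of a target at 2 m from four phase-shifted samples.
samples = simulate_cw_samples(2.0, 20e6)
print(cw_tof_depth(four_step_phase(*samples), 20e6))
```

Note that Eq. (2) is only unambiguous while the phase stays below 2π, which at 20 MHz corresponds to roughly 7.5 m.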


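The goal of ToF imaging through scattering media is to suppress the scattered component or to separate the target component from the received signal, thereby improving ToF imaging quality in scattering scenes. Steady-state ToF imaging acquires scene information directly with a commercial ToF camera and does not need to recover an accurate temporal response. Because PL-ToF and CW-ToF rely on different imaging principles, researchers have developed different depth-recovery methods for each type of system.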

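Steady-state ToF imaging in a scattering scene can be described as follows: the modulated light source illuminates the scene, and the light reflected by the target is received by the sensor. From a signal-processing viewpoint, the received signal is the convolution of the emitted signal ${L_{{\rm{emit}}}}\left( t \right)$ with the scene response $i\left( t \right)$:

$$ {L_{{\rm{receive}}}}\left( t \right) = {L_{{\rm{emit}}}}\left( t \right) * i\left( t \right) $$ (3)

When a scattering medium is present, the sensor receives a mixture of light reflected by the target and scattered light, as shown in Fig. 3. In this case $i\left( t \right) = {i_{{\rm{reflect}}}}\left( t \right) + {i_{{\rm{scatter}}}}\left( t \right)$, and the depth calculation error is large. Multipath interference in scattering scenes therefore causes ToF imaging to misestimate object distances and distort 3D shapes, which hinders its use in fog, underwater, and similar conditions. ToF imaging through scattering media, which recovers valid depth information from such scenes more accurately, is thus of considerable significance and has promising applications.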

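In a PL-ToF camera the light source is modulated by a square pulse. In a scene without scattering media, the returned signal ${L_{{\rm{receive}}}}\left( t \right)$ is affected only by the target: its amplitude is proportional to the target reflectance, and its time delay relative to the emitted signal ${L_{{\rm{emit}}}}\left( t \right)$ is determined by the actual target distance (Fig. 4(a)). When a scattering medium is present, the returned signal ${L_{{\rm{receive}}}}\left( t \right)$ is affected by light scattering and becomes a superposition of multiple echoes from the target and the scattered components. The waveform is distorted and no longer square, so solving for depth with Eq. (1) gives a large error, as shown in Fig. 4(b).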
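The depth bias described above can be reproduced with a small simulation of Eq. (3) followed by Eq. (1). This is a sketch under stated assumptions, not a model taken from the cited work. The exponential scatter tail, the two back-to-back gating windows of width `t_p`, and the parameters `scatter_gain` and `sigma_t` are all hypothetical choices made for illustration.

```python
import math

C = 299_792_458.0  # speed of light in vacuum (m/s)


def convolve(x, h):
    """Plain discrete convolution, the sampled form of Eq. (3)."""
    y = [0.0] * (len(x) + len(h) - 1)
    for i, xi in enumerate(x):
        for j, hj in enumerate(h):
            y[i + j] += xi * hj
    return y


def scene_response(d0, dt, n, scatter_gain=0.0, sigma_t=0.1):
    """Sampled i(t) = i_reflect(t) + i_scatter(t) for a target at depth d0.

    The scatter term is a toy exponential tail on 0 < t <= 2*d0/c
    (an illustrative assumption, not a calibrated fog model).
    """
    resp = [0.0] * n
    k0 = round(2.0 * d0 / C / dt)           # sample index of the target echo
    for k in range(1, k0 + 1):
        z = 0.5 * C * k * dt                # depth that returns at time k*dt
        resp[k] += scatter_gain * math.exp(-2.0 * sigma_t * z)
    resp[k0] += math.exp(-2.0 * sigma_t * d0)
    return resp


def pl_tof_depth(resp, dt, t_p):
    """Square pulse -> Eq. (3) -> charges in two windows -> Eq. (1)."""
    n_p = round(t_p / dt)
    received = convolve([1.0] * n_p, resp)
    q0 = sum(received[:n_p])                # window S0: [0, t_p)
    q1 = sum(received[n_p:2 * n_p])         # window S1: [t_p, 2*t_p)
    return 0.5 * C * t_p * q1 / (q0 + q1)


dt, t_p = 1e-10, 30e-9
clear = pl_tof_depth(scene_response(3.0, dt, 300), dt, t_p)
foggy = pl_tof_depth(scene_response(3.0, dt, 300, scatter_gain=0.02), dt, t_p)
print(clear, foggy)
```

Because every scattered echo in this toy model arrives no later than the target echo, it shifts charge from the second window into the first, and the estimated depth falls below the clear-scene value.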

Figure 4. Emitted and received light waveforms in PL-ToF imaging. (a) Waveforms in a clear scene; (b) Waveforms in a scattering scene

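Laurenzis M et al. were the first to model this process physically, using a 3D range-gated imaging system, and expressed the signal received by the sensor as[35]:

$$ \begin{split} {L_{\rm receive}} =& {L_{\rm obj}} + {L_{\rm bs}} + {L_{\rm fs}} \\ \approx& \frac{{I\left( {{d_0}} \right)}}{{d_0^2}}{r_{\rm obj}}{\varGamma _{\rm GV}}\left( {{d_0}} \right) + \int_0^{{d_0}} {\frac{{I\left( z \right)}}{{z^2}}{\rho _{\rm s}}{\varGamma _{\rm GV}}\left( z \right){\rm d}z} + {L_{\rm fs}} \end{split} $$ (4)

where ${L_{{\rm{obj}}}}$ is the target reflection signal; ${L_{{\rm{bs}}}}$ is the backscattered signal; ${L_{{\rm{fs}}}}$ is the forward-scattered signal; ${d_0}$ is the distance between the target and the sensor; ${r_{{\rm{obj}}}}$ is the target reflectance; ${\varGamma _{{\rm{GV}}}}$ is the convolution of the light-source pulse response and the returned-signal response; and ${\rho _{\rm{s}}}$ is the local scattering cross-section. This model laid the theoretical foundation for later PL-ToF imaging through scattering media and makes it possible to suppress multipath interference starting from the mechanism by which scattered light degrades depth imaging. Subsequently, Illig D W et al. described the process in Eq. (4) with probability distribution functions and, exploiting the difference between the distributions of the backscattered and target signals, separated the target component by independent component analysis[36]. They recovered target depth at 1.35 to 3.05 m from the camera in turbid water with a single-scattering albedo of 0.9. The method can recover weak target signals in strongly scattering environments, but it fails when the peak of the target signal is lower than the minimum of the backscattered signal, and it applies only when the target signal is non-Gaussian and the backscattered signal is Gaussian. Kijima D et al. further refined the PL-ToF imaging model for fog[37]; the experimental setup and results based on this model are shown in Fig. 5. The model describes the scene response $i\left( t \right)$ as:

$$ \begin{gathered} {i_{{\rm{reflect}}}}\left( t \right) = \dfrac{1}{{d_0^2}}{r_{{\rm{obj}}}}{{\rm{e}}^{ - 2{\sigma _t}{d_0}}}\delta \left( {t - \dfrac{{2{d_0}}}{c}} \right){\rm{d}}t \\ {i_{{\rm{scatter}}}}\left( t \right) = \begin{cases} \dfrac{1}{{z^2}}\omega {\sigma _t}p\left( {g,\theta } \right){{\rm{e}}^{ - 2{\sigma _t}z}}{\rm{d}}z, & 0 \lt t \leqslant 2{d_0}/c \\ 0, & {\rm{otherwise}} \end{cases} \end{gathered} $$ (5)

where ${\sigma _t}$ is the extinction coefficient, ${\sigma _t} = {\sigma _s} + {\sigma _a}$, with ${\sigma _s}$ the scattering coefficient and ${\sigma _a}$ the absorption coefficient; $\delta $ is the Dirac delta function; $\omega $ is the single-scattering albedo, $\omega = {\sigma _s}/{\sigma _t}$; and $p\left( {g,\theta } \right)$ is the phase function, which depends on the scattering angle $\theta $ and the anisotropy parameter $g$. Ref. [38] studied this function and gave it in the form $p\left( {g,\theta } \right) = \dfrac{1 - {g^2}}{4\pi {\left( {1 + {g^2} - 2g\cos \theta } \right)^{3/2}}}$. Kijima D et al. added a time gate that opens immediately after the light source emits, so that it receives a signal containing only the scattered component, and estimated the scattering properties of the fog from Eq. (5). They recovered intensity and depth images in fog with a visibility of 10 m, as shown in Fig. 5: the peak signal-to-noise ratio of the amplitude image was 27.04, and the mean absolute error of the depth image for a target at 2.9 m was 0.07 m. The method estimates the scattered component effectively and recovers depth images of foggy scenes in real time, and it holds broad promise for autonomous driving in fog.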
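The phase function and the scatter term of Eq. (5) are straightforward to evaluate. The sketch below is illustrative only. The function names are hypothetical, and fixing the scattering angle at `theta = pi` (pure backscatter for a co-located source and sensor) is an assumption that the text does not state.

```python
import math


def henyey_greenstein(g, theta):
    """Henyey-Greenstein phase function p(g, theta) in the form quoted from Ref. [38]."""
    return (1.0 - g * g) / (
        4.0 * math.pi * (1.0 + g * g - 2.0 * g * math.cos(theta)) ** 1.5
    )


def hg_solid_angle_integral(g, steps=20000):
    """Midpoint-rule integral of p over the full sphere; should be close to 1."""
    h = math.pi / steps
    total = 0.0
    for k in range(steps):
        theta = (k + 0.5) * h
        total += henyey_greenstein(g, theta) * 2.0 * math.pi * math.sin(theta) * h
    return total


def scatter_density(z, sigma_s, sigma_a, g, theta=math.pi):
    """Integrand of i_scatter in Eq. (5) at depth z, per unit dz.

    theta = pi is an assumption made here for illustration.
    """
    sigma_t = sigma_s + sigma_a          # extinction coefficient
    omega = sigma_s / sigma_t            # single-scattering albedo
    return (
        omega * sigma_t * henyey_greenstein(g, theta)
        * math.exp(-2.0 * sigma_t * z) / (z * z)
    )


print(hg_solid_angle_integral(0.8))
print(scatter_density(1.0, 0.2, 0.01, 0.8), scatter_density(2.0, 0.2, 0.01, 0.8))
```

The rapid fall-off with depth, driven by both the 1/z² factor and the two-way attenuation, is consistent with the observation that scattered light returning from close to the camera dominates the scattered component.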

Figure 6. Emitted and received light waveforms in CW-ToF imaging. (a) Waveforms in a clear scene; (b) Waveforms in a scattering scene

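Ref. [39] studied ToF imaging through scattering media in underwater scenes and, unlike other work, focused on the effect of forward scattering on ToF imaging. It used time gating to suppress backscatter and a Bayesian probabilistic model to identify the reflected pulse in the returned signal corrupted by forward scattering, and then reassigned depth values using information from neighboring pixels. Depth was recovered for targets 7 to 10 m from the camera in three underwater environments: bay, coastal, and deep-sea water. The deviation of the recovered depth image at 10 m was 2.83%. The technique recovers scene depth underwater in real time and is of considerable value for underwater topographic surveys and related applications.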


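In 2018, Consani et al. were the first to investigate the effect of fog on CW-ToF imaging. They simulated ToF imaging in fog by ray tracing in Zemax and showed that the error comes mainly from backscatter that occurs before the light reaches the scene[40]. This study provides a theoretical and simulation basis for CW-ToF imaging through fog and helps explain how aerosol particles affect CW-ToF imaging. The amplitude of a CW-ToF light source is modulated by a sinusoidal signal, and the emitted signal ${L_{{\rm{emit}}}}\left( t \right)$ can be written as:

$$ {L_{{\rm{emit}}}}\left( t \right) = {a_0}\sin \left( {2\pi ft + {\varphi _0}} \right) $$ (6)

where ${a_0}$ is the amplitude of the emitted signal; $f$ is the modulation frequency of the light source; and ${\varphi _0}$ is the initial phase. In a scene without scattering media, the phase difference $\Delta \varphi $ between the received and emitted signals depends only on the actual target distance and the modulation frequency, $\Delta \varphi = 4\pi f{d_0}/c$ (Fig. 6(a)). In a scene with scattering media, the sensor receives a mixture of target-reflected light and scattered light, as shown in Fig. 6(b). For brevity, assume the initial phase ${\varphi _0} = 0$; the received signal is then:

$$ {L_{{\rm{receive}}}}\left( t \right) = {a_t}\sin \left( {2\pi ft + {\varphi _t}} \right) + \sum\limits_i {{a_{si}}\sin \left( {2\pi ft + {\varphi _{si}}} \right)} $$ (7)

where ${a_t}$ is the amplitude of the target-reflected signal, ${a_t} = {a_0}{r_{{\rm{obj}}}}{{\rm{e}}^{ - 2{\sigma _t}{d_0}}}$; ${\varphi _t}$ is its phase, ${\varphi _t} = 4\pi f{d_0}/c$; and ${a_{si}}$ and ${\varphi _{si}}$ are the amplitude and phase of the scattered light along the $i$-th scattering path. The phase difference $\Delta \varphi $ of the received signal is then no longer the phase difference between the target-reflected signal and the emitted signal, because it is affected by the scattered component. To better describe CW-ToF measurements, Gupta M et al. proposed the phasor representation[41], in which the length of the phasor is the amplitude $a$ of the received signal and the angle between the phasor and the positive horizontal axis is the phase $\varphi $:

$$ p = a \cdot {{\rm{e}}^{j\varphi }} $$ (8)

They found that when the modulation frequency is higher than the global transport bandwidth, the multipath interference component received by the ToF camera can be approximated as a DC component, which allows the scattered component in scattering scenes to be suppressed. Takeshi Muraji et al. extended phasor-based imaging through scattering media. Starting from the idea that targets at the same depth are affected identically by the scattered component, they measured the scene at multiple frequencies, clustered pixels at the same depth, and removed the scattered component by linear fitting[42]; for a target depth of 8.71 m, the error of the recovered depth image was 0.23 m. As shown in Fig. 7, the method places no restriction on the modulation-frequency range and requires no tedious iterative optimization. It applies to scenes in which at least two different target reflectances are present at the same depth, and it is of great value for outdoor applications such as autonomous driving in fog.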
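Because all terms in Eq. (7) share one modulation frequency, their sum is a single sinusoid whose phasor is the sum of the individual phasors of Eq. (8). The sketch below uses this to show how scattered paths pull the measured phase away from the target phase. The amplitudes, depths, and function names are hypothetical values chosen for illustration, not data from the cited studies.

```python
import cmath
import math

C = 299_792_458.0  # speed of light in vacuum (m/s)


def depth_to_phase(depth, f_mod):
    """Phase delay 4*pi*f*d/c accumulated over the round trip."""
    return 4.0 * math.pi * f_mod * depth / C


def phase_to_depth(phase, f_mod):
    """Inverse mapping; the phase is wrapped into [0, 2*pi) first."""
    return C * (phase % (2.0 * math.pi)) / (4.0 * math.pi * f_mod)


def phasor(amplitude, phase):
    """Eq. (8): p = a * exp(j * phi)."""
    return amplitude * cmath.exp(1j * phase)


def measured_depth(target, scatter_paths, f_mod):
    """Depth a CW-ToF pixel would report for a target plus scattered paths.

    `target` and every entry of `scatter_paths` are (amplitude, depth) pairs.
    """
    total = 0j
    for amplitude, depth in [target] + list(scatter_paths):
        total += phasor(amplitude, depth_to_phase(depth, f_mod))
    return phase_to_depth(cmath.phase(total), f_mod)


f_mod = 20e6
target = (1.0, 2.0)                               # (amplitude, depth in m)
fog = [(0.3, 0.5), (0.2, 1.0), (0.1, 1.5)]        # hypothetical scattered paths
print(measured_depth(target, [], f_mod))          # clear scene
print(measured_depth(target, fog, f_mod))         # scattering scene
```

In this configuration all phasors lie within a half-plane, so the phase of the sum falls between the smallest scatter phase and the target phase, and the reported depth is shorter than the true 2 m.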

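Refs. [43] and [44] also use the phasor representation and estimate the scattered component by iteratively reweighted least squares, based on a local quadratic prior and a global symmetry prior. The method suits scenes that contain both a foreground target and a background, and the target must be far enough from the camera for the scattered component in front of the target to saturate in the received signal, so that the scattered component in the background region can approximate that in the target region. Experiments show that the smallest mean absolute error of the recovered depth images is 1.8 cm. Because the method cannot recover depth when the target fills the whole field of view, when the scattering medium is non-uniform, or when the scattering scene is dynamic, it needs further optimization and improvement for practical use. The authors' group developed polarization phasor imaging, which brings polarization-based optical dehazing from visible-light imaging into CW-ToF imaging through scattering media. It introduces the concept of the degree-of-polarization phasor to describe the polarization properties of ToF measurements in scattering scenes and estimates the scattered component from the background region on that basis, recovering amplitude and depth images in fog with a smallest mean absolute depth error of 0.01 m[45]. The experimental setup is shown in Fig. 8: a time-sequential polarization imaging system rotates a polarizer in front of the sensor to acquire amplitude and phase images at different polarization states.

In addition, Lu H et al. considered both the visible-light and the depth information of a scattering scene. They estimated a coarse depth image with an underwater median dual-channel prior, filled in missing depth values by image interpolation, and filtered the depth image using the dehazed visible-light image to improve underwater depth imaging quality[46], as shown in Fig. 9. The method combines the depth and color information of the scene but does not account for the mechanism by which scattered light degrades the depth image. It is of significant value for 3D reconstruction of the seabed.

In steady-state ToF imaging, researchers have adopted different strategies for different ToF imaging principles to improve imaging quality in scattering scenes. PL-ToF methods mainly suppress the effect of scattered light on the pulse-delay measurement, whereas CW-ToF methods mainly separate the scattered component using the phasor representation. Table 1 summarizes the performance of the different methods. Current depth-recovery accuracy is at the centimeter level and needs further improvement.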

Table 1. Comparison of different methods in ToF steady-state imaging

| Camera | Approach | Performance |
| --- | --- | --- |
| PL-ToF | Multiple time-gated exposures[37] | Mean absolute error of 0.07 m at 2.9 m |
| PL-ToF | Bayesian reconstruction[39] | Image deviation of 2.83% at 10 m |
| CW-ToF | Multi-frequency phasor imaging[42] | Image error of 0.23 m at 8.71 m |
| CW-ToF | Iterative optimization[43-44] | Minimum mean absolute error of 1.8 cm |
| CW-ToF | Polarization phasor imaging[45] | Minimum mean absolute error of 0.01 m at 1 m |

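Transient imaging captures light at the instant it propagates through the scene, which helps analyze light paths in complex environments. ToF-camera-based transient imaging began in 2013, when Heide et al. modified the hardware of a commercial CW-ToF camera, established a time-domain ToF transient imaging model, measured the scene at multiple modulation frequencies and phases, and reconstructed the transient image computationally[47], as shown in Fig. 10. A transient image consists of a set of transient pixels ${\alpha _{x,y}}\left( \tau \right)$[48], where the subscripts $x,y$ are the sensor pixel coordinates; a transient pixel describes how the pixel intensity varies over time when the scene is illuminated by an extremely short light pulse. Because noise is hard to analyze in the time-domain model and its computational cost is high, a frequency-domain transient imaging model[49] and a compressed-sensing transient imaging model[50] were developed later. Figure 11 illustrates transient ToF imaging through scattering media. The light source emits a signal $g\left( t \right)$ that illuminates the scene. Besides the target-reflected light attenuated by the scattering medium, the signal returned to the ToF sensor also contains scattered light. After correlation with the camera's internal reference signal $f\left( {t + \phi } \right)$, the final measured signal is:

$$ {g_{{\rm{measure}}}} = f\left( {t + \phi } \right) \otimes \int\limits_p {{\alpha _{{p_i}}}g\left( {t - {\tau _{{p_i}}}} \right)} {\rm{d}}p $$ (9)

where ${\alpha _{{p_i}}}$ is the reflectance of the target along the ${p_i}$-th path, and ${\tau _{{p_i}}}$ is the time delay of the signal returned along the ${p_i}$-th path. In transient imaging, the goal of ToF imaging through scattering media is to separate the path of the target-reflected light from the measurement ${g_{{\rm{measure}}}}$ and reconstruct the transient image $\alpha $.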

Figure 11. Schematic diagram of the ToF transient imaging process in a scattering scene

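To recover transient images in scattering scenes, Heide F et al. used convolutional sparse coding: they described transient pixels with modified Gaussian functions, built an overcomplete basis, and formulated a basis-pursuit model to reconstruct the transient image[51]. The experiments were carried out in mixtures of milk and water at different concentrations, with a minimum recovery error within 1 to 5 cm. The method suppresses scattering degradation to some extent, but its fidelity to the real physical process is limited and its computational cost is high. Wu R H et al. exploited the polarization of light: using an active polarized transient imaging system, they introduced the concept of the transient degree of polarization and recovered transient images in scattering media with uniform polarization properties[52]; the experimental setup and reconstruction results are shown in Fig. 12. Ref. [53] extended this work with an adaptive polarization-difference method for transient imaging. By using the polarization of light, polarized transient imaging effectively suppresses the influence of scattered light on transient-image reconstruction; in scattering media with a concentration of 0.1 to 10 mfp, the error of the recovered transient image does not exceed 0.5 m. However, the method needs a long acquisition of a large amount of data to reconstruct the transient image and is unsuitable for dynamic, unstable scattering scenes, so further work to shorten its running time is needed before it can be applied to real underwater scenes.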

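In addition, Wu R H et al. extended the single-scattering model of steady-state imaging to transient imaging and used an offset-capture method to solve for depth and reconstruct the transient image[54]. Their single-scattering model for transient imaging is:

$$ \begin{split} S\left( t \right) =& \frac{{I\beta {{\rm{e}}^{ - 2\beta x\left( t \right)}}}}{{4\pi {{\left( {c{t_0}/2 + x\left( t \right)} \right)}^2}}}{1_{t \in \left[ {{t_0},{t_R}} \right)}} + \\& \frac{{I\rho {{\rm{e}}^{ - 2\beta x\left( {{t_R}} \right)}}}}{{4\pi {{\left( {c{t_0}/2 + x\left( {{t_R}} \right)} \right)}^2}}}\delta \left( {t - {t_R}} \right) \end{split} $$ (10)

where the first term is the scattered component and the second term is the reflected component; $I$ is the intensity of the light source; $\beta $ is the scattering coefficient; ${{\rm{e}}^{ - 2\beta x\left( t \right)}}$ is the attenuation term; ${t_0}$ is the time of flight of light from the camera to the surface of the scattering medium; ${1_{t \in \left[ {{t_0},{t_R}} \right)}}$ equals 1 for $t \in \left[ {{t_0},{t_R}} \right)$ and 0 elsewhere; ${t_R}$ is the time of flight of light in the scattering medium from the medium surface to the target; and $x\left( t \right) = c\left( {t - {t_0}} \right)/\left( {2n} \right)$, where $n$ is the refractive index. In a scene with 15 mL of milk mixed into 60 L of water, the transient image reconstructed by this method had a peak signal-to-noise ratio of 34.4065. This work enriches the models for transient-image reconstruction in scattering scenes and reconstructs both the depth and the texture of the target; it is of significant value for fire rescue, industrial inspection, underwater surveys, and other fields. In transient imaging, researchers have used convolutional sparse coding, polarized transient imaging, and single-scattering transient models to recover transient images in scattering scenes. Each method has its own characteristics, and their performance is summarized in Table 2. Current transient imaging methods can hardly achieve real-time imaging, and depth-recovery accuracy in scattering scenes is at the centimeter level.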
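To make the shape of Eq. (10) concrete, the sketch below samples the single-scattering transient on a uniform time grid. It is an illustration under stated assumptions rather than the authors' implementation. It assumes $x(t) = c(t - t_0)/(2n)$, which for `n = 1` reduces to $c(t - t_0)/2$, treats `rho` as the target reflectance (the text does not define it), and approximates the Dirac delta by placing its weight divided by `dt` in the nearest time bin. All function names are hypothetical.

```python
import math

C = 299_792_458.0  # speed of light in vacuum (m/s)


def path_in_medium(t, t0, n=1.0):
    """x(t): one-way path length inside the medium (assumed c*(t - t0)/(2n))."""
    return C * (t - t0) / (2.0 * n)


def scatter_term(t, t0, t_r, intensity, beta, n=1.0):
    """First term of Eq. (10); zero outside the window [t0, t_r)."""
    if not (t0 <= t < t_r):
        return 0.0
    x = path_in_medium(t, t0, n)
    return intensity * beta * math.exp(-2.0 * beta * x) / (
        4.0 * math.pi * (C * t0 / 2.0 + x) ** 2
    )


def reflection_weight(t0, t_r, intensity, rho, beta, n=1.0):
    """Weight multiplying the Dirac delta in the second term of Eq. (10)."""
    x = path_in_medium(t_r, t0, n)
    return intensity * rho * math.exp(-2.0 * beta * x) / (
        4.0 * math.pi * (C * t0 / 2.0 + x) ** 2
    )


def sample_transient(times, t0, t_r, intensity, rho, beta, n=1.0):
    """Sample S(t) on a uniform grid; the delta goes into the bin nearest t_r."""
    dt = times[1] - times[0]
    samples = [scatter_term(t, t0, t_r, intensity, beta, n) for t in times]
    k_r = min(range(len(times)), key=lambda k: abs(times[k] - t_r))
    samples[k_r] += reflection_weight(t0, t_r, intensity, rho, beta, n) / dt
    return samples


times = [k * 1e-10 for k in range(120)]            # 0 to 11.9 ns in 0.1 ns steps
profile = sample_transient(times, t0=2e-9, t_r=8e-9, intensity=1.0, rho=0.5, beta=0.5)
print(profile.index(max(profile)))                 # bin holding the target return
```

The sampled profile is zero before the light enters the medium, decays through the scattering window, and ends in a sharp reflection peak, which is the feature a depth-recovery method needs to locate.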

Table 2. Comparison of different methods in ToF transient imaging


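ToF cameras capture the distance, texture, and other shape information of scene targets and have already proven useful in autonomous driving, human-computer interaction, and other fields. Potential applications of ToF imaging through scattering media include the following:

(1) Industrial automation. Behrje U et al. mounted a ToF camera on top of a forklift to enable autonomous forklift driving in a warehouse[13]. When the warehouse air is heavily laden with smoke and dust, multipath interference corrupts the ToF depth measurement, leading to localization errors and misestimated distances to obstacles ahead. Research on ToF imaging through scattering media therefore helps promote the use of ToF cameras in industrial automation.

(2) Autonomous driving. In 2021, Niskanen I et al. detected moving vehicles based on BIM and built 3D models of them[14], as shown in Fig. 13. Detection accuracy reached 90% in clear weather, but performance degrades in fog because aerosol particles scatter light. Research on ToF imaging through scattering media can therefore further advance the use of ToF cameras in road traffic flow monitoring, logistics monitoring, and autonomous driving.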

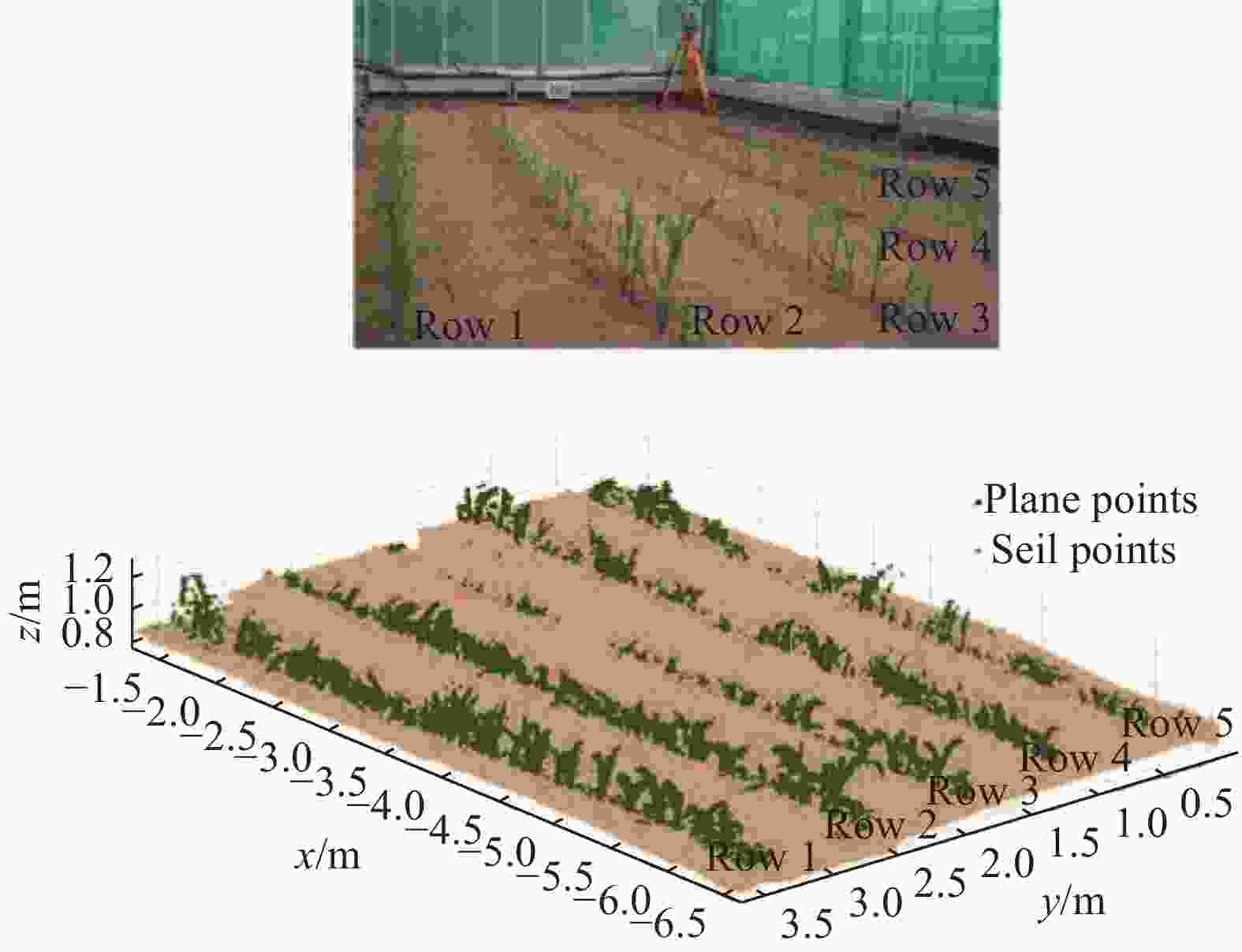

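(3) Agricultural production. In 2011, Klose R et al. used ToF cameras to assess plant phenotypes, which allows plant growth to be monitored during breeding and cultivation and cultivation strategies to be optimized[55]. In 2014, Kazmi W et al. tested the performance of three ToF cameras for imaging plant leaves, advancing the use of ToF cameras in automated agricultural production[56]. In 2016, Dionisio A et al. used a Kinect camera with height selection and RGB segmentation to separate crops from weeds[57]. In 2018, Ref. [58] reconstructed maize plants in 3D, as shown in Fig. 14, to assess their growth and improve crop management. In outdoor scenes, fog and haze degrade ToF reconstruction quality and hence plant assessment, so research on ToF imaging through scattering media is of significant value for agricultural production.

(4) Underwater surveys. In 2014, Tsui C L et al. at the University of Washington, USA, were the first to use a ToF camera for underwater target depth detection; they modified the hardware of a commercial ToF camera to acquire underwater depth images and point clouds[59]. Atif Anwer et al. combined a physical model of light refraction with the RGB, depth, and near-infrared intensity images acquired underwater by a Kinect to achieve 3D imaging of underwater targets[60]. Digumarti S T et al. reconstructed underwater corals in 3D in a coral garden in the Bahamas to observe the ecological volume of the corals, as shown in Fig. 15[61]. In turbid water, scattered light markedly degrades ToF reconstruction quality, so research on ToF imaging through scattering media helps improve underwater 3D reconstruction and extend ToF cameras to topographic surveys and other tasks in diverse underwater environments.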


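ToF imaging is an active depth-imaging technique. In scattering environments, light scattering by the medium subjects ToF depth measurement to multipath interference and causes large errors. Many studies have addressed the correction of multipath interference for ToF in scattering scenes; depending on whether an accurate temporal response is recovered, they fall into steady-state imaging and transient imaging. This review has described and analyzed the ToF imaging process in scattering environments for both areas and surveyed the corresponding methods for imaging through scattering media. Compared with steady-state imaging, transient imaging records richer scene information and helps explain light propagation in complex environments, but it is costlier and hard to run in real time. Steady-state imaging generally acquires data with commercial ToF cameras, needs no accurate temporal response, and costs less. ToF imaging through scattering media is still in its early stages. Existing methods have effectively improved ToF imaging quality in scattering environments, but scattering-induced multipath interference is difficult to eliminate completely, recovery accuracy drops in scenes with non-uniformly distributed scattering media, and the robustness and accuracy of all methods still need improvement. Future research should move toward low-cost, high-accuracy recovery.

Based on the current state of research worldwide, future work on ToF imaging through scattering media can advance in the following three directions:

(1) Theory of ToF imaging through scattering media. Several imaging models have been established for steady-state and transient imaging, but each has its own limitations, such as long computation times or the assumption of a spatially uniform scattering medium, which restrict their use in real scenes. Future work can therefore draw on theory from other fields of imaging through scattering media, such as visible-light imaging through scattering media[62-64], to enrich the theory and techniques of ToF imaging through scattering media and advance its application in real scenes.

(2) Deep-learning-based ToF depth imaging through scattering media. Deep learning has shown strong capability in image processing, and several deep networks have been applied to multipath interference (MPI) correction for ToF cameras[27-28] with good results. No deep network has yet been built specifically for the characteristics of ToF imaging in scattering environments, so deep-learning-based ToF depth imaging through scattering media is a direction worth pursuing.

(3) Imaging through scattering media by fusing ToF with other techniques. Because different detectors have their own distinctive strengths[65], fusing data from different detectors has long been a research focus in computer vision. RGB-D cameras have already been used for underwater depth imaging[46]; in the future, ToF can be combined with other imaging techniques to better exploit the strengths of different detectors and achieve more robust imaging through scattering media.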

Review of Time-of-Flight imaging through scattering media technology

Abstract: Time-of-Flight (ToF) imaging obtains the depth of a scene from the time light takes to travel between the target and the camera, and offers compact size, low cost, and real-time imaging. In scattering environments, light scattered by the medium introduces multipath interference into ToF imaging, which causes large depth measurement errors and limits the use of ToF cameras in scattering scenes. ToF imaging through scattering media corrects the multipath interference caused by scattered light by separating the target component from the mixed signal received by the sensor, thereby recovering depth information in scattering scenes. It has broad prospects in autonomous driving in fog, underwater surveys, biomedicine, and other fields. This review introduces the basic principles of PL-ToF and CW-ToF imaging according to the type of ToF imaging system, and describes and analyzes the mechanisms and characteristics of steady-state and transient ToF imaging in scattering scenes. Research on imaging through scattering media with steady-state and with transient ToF imaging is reviewed and summarized in turn, and the application prospects of the technique are presented. Finally, future development trends are discussed in light of the strengths and weaknesses of existing techniques.

Key words: imaging systems; imaging through scattering media; Time-of-Flight camera; steady-state imaging; transient imaging


[1] Jang W, Je C, Seo Y, et al. Structured-light stereo: Comparative analysis and integration of structured-light and active stereo for measuring dynamic shape [J]. Optics & Lasers in Engineering, 2013, 51(11): 1255-1264.

[2] Xiong H, Zong Z, Chen C. Approach for accurately extracting the full resolution centers of structured light stripe [J]. Optics and Precision Engineering, 2009, 17(5): 1057-1062. (in Chinese) doi: 10.3321/j.issn:1004-924X.2009.05.019

[3] Riordan A O, Newe T, Toal D, et al. Stereo vision sensing: Review of existing systems[C]//International Conference on Sensing Technology, 2018: 178-184.

[4] Sun J, Wu Z, Liu Q, et al. Field calibration of stereo vision sensor with large FOV [J]. Optics and Precision Engineering, 2009, 17(3): 633-640. (in Chinese) doi: 10.3321/j.issn:1004-924X.2009.03.027

[5] Wang Q H, Wang S, Li B, et al. In-situ 3D reconstruction of worn surface topography via optimized photometric stereo [J]. Measurement, 2022, 190: 110679. doi: 10.1016/j.measurement.2021.110679

[6] Bu Y, Du X, Zeng Z, et al. Research progress and trend analysis of non-scanning laser 3D imaging radar [J]. Chinese Optics, 2018, 11(5): 711-727. (in Chinese) doi: 10.3788/co.20181105.0711

[7] Wang F, Tang W, Wang T, et al. Design of 3D laser imaging receiver based on 8×8 APD detector array [J]. Chinese Optics, 2015, 8(3): 422-427. (in Chinese) doi: 10.3788/co.20150803.0422

[8] Lange R, Seitz P. Solid-state time-of-flight range camera [J]. IEEE Journal of Quantum Electronics, 2001, 37(3): 390-397. doi: 10.1109/3.910448

[9] Mufti F, Mahony R. Statistical analysis of measurement processes for time-of-flight cameras[C]//Proceedings of SPIE, 2009, 7447: 720-731.

[10] Langmann B, Hartmann K, Loffeld O. Increasing the accuracy of Time-of-Flight cameras for machine vision applications [J]. Computers in Industry, 2013, 64(9): 1090-1098. doi: 10.1016/j.compind.2013.06.006

[11] Kadambi A, Raskar R. Rethinking machine vision time of flight with GHz heterodyning [J]. IEEE Access, 2017, 5: 26211-26223. doi: 10.1109/ACCESS.2017.2775138

[12] Molina J, Escudero-Viñolo M, Signoriello A, et al. Real-time user independent hand gesture recognition from time-of-flight camera video using static and dynamic models [J]. Machine Vision & Applications, 2013, 24: 187-204.

[13] Behrje U, Himstedt M, Maehle E. An autonomous forklift with 3D time-of-flight camera-based localization and navigation[C]//2018 15th International Conference on Control, Automation, Robotics and Vision (ICARCV), 2018: 1739-1746.

[14] Niskanen I, Immonen M, Hallman L, et al. Time-of-flight sensor for getting shape model of automobiles toward digital 3D imaging approach of autonomous driving [J]. Automation in Construction, 2021, 121: 103429. doi: 10.1016/j.autcon.2020.103429

[15] Lu Chunqing, Yang Mengfei, Wu Yanpeng, et al. Research on pose measurement and ground object recognition technology based on C-TOF imaging [J]. Infrared and Laser Engineering, 2020, 49(1): 0113005. (in Chinese) doi: 10.3788/IRLA202049.0113005

[16] Bhandari A, Raskar R. Signal processing for time-of-flight imaging sensors: An introduction to inverse problems in computational 3-D imaging [J]. IEEE Signal Processing Magazine, 2016, 33(5): 45-58. doi: 10.1109/MSP.2016.2582218

[17] Lin J, Liu Y, Hullin M B, et al. Fourier analysis on transient imaging with a multifrequency time-of-flight camera[C]//2014 IEEE Conference on Computer Vision and Pattern Recognition, IEEE, 2014: 3230-3237.

[18] Godbaz J P, Cree M J, Dorrington A A. Mixed pixel return separation for a full-field ranger[C]//Image & Vision Computing New Zealand, Ivcnz International Conference, IEEE, 2009: 1-6.

[19] Dorrington A A, Godbaz J P, Cree M J, et al. Separating true range measurements from multi-path and scattering interference in commercial range cameras[C]//Proceedings of SPIE, 2011, 7864: 1-10.

[20] Patil S S, Bhade P M, Inamdar V S. Depth recovery in time of flight range sensors via compressed sensing algorithm [J]. International Journal of Intelligent Robotics and Applications, 2020, 4(6): 243-251.

[21] Freedman D, Krupka E, Smolin Y, et al. SRA: Fast removal of general multipath for ToF sensors[C]//European Conference on Computer Vision (ECCV 2014), 2014, 8689: 234-249.

[22] Jiang B, Jin X, Peng Y, et al. Design of multipath error correction algorithm of coarse and fine sparse decomposition based on compressed sensing in time-of-flight cameras [J]. The Imaging Science Journal, 2020, 67(8): 464-474.

[23] Bhandari A, Feigin M, Izadi S, et al. Resolving multipath interference in Kinect: An inverse problem approach[C]//Valencia, Spain Sensors, IEEE, November 2-5, 2014: 614-617.

[24] Kirmani A, Benedetti A, Chou P A. SPUMIC: Simultaneous phase unwrapping and multipath interference cancellation in time-of-flight cameras using spectral methods[C]//Multimedia and Expo (ICME), 2013 IEEE International Conference, 2013: 1-6.

[25] Whyte R, Streeter L, Cree M J, et al. Resolving multiple propagation paths in time of flight range cameras using direct and global separation methods [J]. Optical Engineering, 2015, 54(11): 1131109.

[26] Marco J, Hernandez Q, Muñoz A, et al. DeepToF: Off-the-shelf real-time correction of multipath interference in time-of-flight imaging [J]. ACM Transactions on Graphics, 2018, 36(6): 1-12.

[27] Su S, Heide F, Wetzstein G, et al. Deep end-to-end time-of-flight imaging[C]//2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2018: 6383-6392.

[28] Agresti G, Schaefer H, Sartor P, et al. Unsupervised domain adaptation for ToF data denoising with adversarial learning[C]//2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2020: 5579-5586.

[29] Nitzan D, Brain A E, Duda R O. The measurement and use of registered reflectance and range data in scene analysis [J]. Proceedings of the IEEE, 1977, 65(2): 206-220. doi: 10.1109/PROC.1977.10458

[30] Spirig T, Seitz P, Vietze O, et al. The lock-in CCD-two-dimensional synchronous detection of light [J]. IEEE Journal of Quantum Electronics, 1995, 31(9): 1705-1708. doi: 10.1109/3.406386

[31] Lange R, Seitz P, Biber A, et al. Time-of-flight range imaging with a custom solid state image sensor[C]//Proceedings of SPIE, 1999, 3823: 180-191.

[32] Zanuttigh P, Marin G, Mutto C D, et al. Time-of-flight and structured light depth cameras: Technology and applications[M]. Switzerland: Springer International Publishing, 2016: 27-113.

[33] Lu Chunqing, Song Yuzhi, Wu Yanpeng, et al. Theoretical investigation on correlating time-of-flight 3D sensation error [J]. Infrared and Laser Engineering, 2019, 48(11): 1113002. (in Chinese) doi: 10.3788/IRLA201948.1113002

[34] Bronzi D, Zou Y, Villa F, et al. Automotive three-dimensional vision through a single-photon counting SPAD camera [J]. IEEE Transactions on Intelligent Transportation Systems, 2016, 17(3): 782-795. doi: 10.1109/TITS.2015.2482601

[35] Laurenzis M, Christnacher F, Bacher E, et al. New approaches of three-dimensional range-gated imaging in scattering environments[C]//Proceedings of SPIE, 2011, 8186(1): 818603.

[36] Illig D W, Jemison W D, Mullen L J. Independent component analysis for enhancement of an FMCW optical ranging technique in turbid waters [J]. Applied Optics, 2016, 55(31): C25-C33. doi: 10.1364/AO.55.000C25

[37] Kijima D, Kushida T, Kitajima H, et al. Time-of-flight imaging in fog using multiple time-gated exposures [J]. Optics Express, 2021, 29(5): 6453-6467. doi: 10.1364/OE.416365

[38] Henyey L G, Greenstein J L. Diffuse radiation in the Galaxy [J]. Astrophysical Journal, 1941, 93: 70-83. doi: 10.1086/144246

[39] Yin X, Cheng H, Yang K, et al. Bayesian reconstruction method for underwater 3D range-gated imaging enhancement [J]. Applied Optics, 2020, 59(2): 370-379. doi: 10.1364/AO.59.000370

[40] Consani C, Druml N, Dielacher M, et al. Fog effects on time-of-flight imaging investigated by ray-tracing simulations [J]. Proceedings, 2018, 2(13): 859.

[41] Gupta M, Nayar S K, Hullin M B, et al. Phasor imaging: A generalization of correlation-based time-of-flight imaging [J]. ACM Transactions on Graphics, 2015, 34(5): 1-18.

[42] Muraji T, Tanaka K, Funatomi T, et al. Depth from phasor distortions in fog [J]. Optics Express, 2019, 27(13): 18858-18868. doi: 10.1364/OE.27.018858

[43] Fujimura Y, Sonogashira M, Iiyama M. Defogging kinect: Simultaneous estimation of object region and depth in foggy scenes[EB/OL]. (2019-04-01)[2022-05-09]. https://arxiv.org/abs/1904.00558.

[44] Fujimura Y, Sonogashira M, Iiyama M. Simultaneous estimation of object region and depth in participating media using a ToF camera [J]. IEICE Transactions on Information and Systems, 2020, 103-D(3): 660-673. doi: 10.1587/transinf.2019EDP7219

[45] Zhang Y, Wang X, Zhao Y, et al. Time-of-flight imaging in fog using polarization phasor imaging [J]. Sensors, 2022, 22(9): 3159. doi: 10.3390/s22093159

[46] Lu H, Zhang Y, Li Y, et al. Depth map reconstruction for underwater kinect camera using inpainting and local image mode filtering [J]. IEEE Access, 2017, 5: 7115-7122. doi: 10.1109/ACCESS.2017.2690455

[47] Heide F, Hullin M B, Gregson J, et al. Low-budget transient imaging using photonic mixer devices [J]. ACM Transactions on Graphics, 2013, 32(4): 45.

[48] Smith A M, Skorupski J, Davis J. Transient rendering[R]. Santa Cruz, CA: School of Engineering, University of California, Santa Cruz, 2008.

[49] Lin J, Liu Y, Hullin M B, et al. Fourier analysis on transient imaging with a multifrequency time-of-flight camera[C]//2014 IEEE Conference on Computer Vision and Pattern Recognition, June 23-28, 2014, Columbus, OH, USA, 2014: 3230-3237.

[50] Qiao H, Lin J, Liu Y, et al. Resolving transient time profile in ToF imaging via log-sum sparse regularization [J]. Optics Letters, 2015, 40(6): 918-921. doi: 10.1364/OL.40.000918

[51] Heide F, Xiao L, Kolb A, et al. Imaging in scattering media using correlation image sensors and sparse convolutional coding [J]. Optics Express, 2014, 22(21): 26338-26350. doi: 10.1364/OE.22.026338

[52] Wu R H, Suo J N, Dai F, et al. Scattering robust 3D reconstruction via polarized transient imaging [J]. Optics Letters, 2016, 41(17): 3948-3951. doi: 10.1364/OL.41.003948

[53] Wu R H, Adrian J, Suo J N, et al. Adaptive polarization-difference transient imaging for depth estimation in scattering media [J]. Optics Letters, 2018, 43(6): 1299-1302. doi: 10.1364/OL.43.001299

[54] Wu R H, Dai F, Yin D, et al. Imaging through scattering media based on transient imaging technique [J]. Chinese Journal of Computers, 2018, 41(11): 2421-2435. (in Chinese) doi: 10.11897/SP.J.1016.2018.02421

[55] Klose R, Penlington J, Ruckelshausen A. Usability of 3D time-of-flight cameras for automatic plant phenotyping [J]. Image Anal Agric Prod Process, 2011, 69: 93-105.

[56] Kazmi W, Foix S, Alenya G, et al. Indoor and outdoor depth imaging of leaves with time-of-flight and stereo vision sensors: Analysis and comparison [J]. ISPRS Journal of Photogrammetry & Remote Sensing, 2014, 88: 128-146.

[57] Dionisio A, José D, César F Q, et al. An approach to the use of depth cameras for weed volume estimation [J]. Sensors, 2016, 16(7): 972. doi: 10.3390/s16070972

[58] Vazquez-Arellano M, Reiser D, Paraforos D S, et al. 3-D reconstruction of maize plants using a time-of-flight camera [J]. Comput Electron Agr, 2018, 145: 235-247.

[59] Tsui C L, Schipf D, Lin K R, et al. Using a time of flight method for underwater 3-dimensional depth measurements and point cloud imaging[C]//OCEANS 2014 - TAIPEI, IEEE, 2014.

[60] Anwer A, Azhar A S S, Khan A, et al. Underwater 3-D scene reconstruction using kinect v2 based on physical models for refraction and time of flight correction [J]. IEEE Access, 2017, 5: 15960-15970.

[61] Digumarti S T, Chaurasia G, Taneja A, et al. Underwater 3D capture using a low-cost commercial depth camera[C]//2016 IEEE Winter Conference on Applications of Computer Vision (WACV), 07-10 March 2016.

[62] Jian L, Ju H J, Zhang W F, et al. Review of optical polarimetric dehazing technique [J]. Acta Optica Sinica, 2017, 37(4): 0400001. (in Chinese) doi: 10.3788/AOS201737.0400001

[63] He K M, Sun J, Tang X O, et al. Single image haze removal using dark channel prior [J]. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2011, 33(12): 2341-2353. doi: 10.1109/CVPRW.2009.5206515

[64] Han J, Yang K, Xia M, et al. Resolution enhancement in active underwater polarization imaging with modulation transfer function analysis [J]. Applied Optics, 2015, 54: 3294-3302. doi: 10.1364/AO.54.003294

[65] Xiao J, Stolkin R, Gao Y, et al. Robust fusion of color and depth data for RGB-D target tracking using adaptive range-invariant depth models and spatio-temporal consistency constraints [J]. IEEE Transactions on Cybernetics, 2018, 48(8): 2485-2499. doi: 10.1109/TCYB.2017.2740952
